Typelogical Grammar

Typelogical grammars are substructural logics, designed for reasoning about the composition of form and meaning in natural language. At the core of these grammars are residuated families of type-forming operations; a hierarchy of typelogical grammars results from the choices one makes with respect to the structural properties of the type-forming operations, and the means one introduces to control the grammatical resource management. Computational semantics is obtained from the Curry-Howard interpretation of categorial derivations. Parsing and natural language processing is modeled in terms of suitably refined versions of the proof nets of linear logic.

- 1. A bit of history

- 2. The Lambek systems

- 3. Extended typelogical grammars

- 4. Proof nets and processing

- 5. Recognizing capacity, complexity

- 6. Related approaches

- Bibliography

- Academic Tools

- Other Internet Resources

- Related Entries

1. A bit of history

Typelogical grammar has its roots in two seminal papers written by Jim Lambek half a century ago (Lambek 1958, 1961). In these papers, the author set himself the aim “to obtain an effective rule (or algorithm) for distinguishing sentences from non-sentences, which works not only for the formal languages of interest to the mathematical logician, but also for natural languages […]”. To realize this goal, the familiar parts of speech (nouns, verbs, …) are turned into formulas of a logic — a logic designed to reason about grammatical composition. The judgement whether a phrase is wellformed, under this view, is the outcome of a process of deduction. A decision procedure for such judgements is obtained by casting the grammar logic in the format of a Gentzen-style sequent calculus. The sequent presentation is extremely simple: the logic of grammar is a logic without structural rules. Contraction and Weakening are dropped; their presence would entail that wellformedness is unaffected by arbitrary copying or deletion of grammatical material. To take into account also word order and phrase structure information, Lambek further removes the structural rules of Exchange and (in the 1961 paper) Associativity.

At the time of their publication, these ideas did not resonate; their impact on the field of computational linguistics dates from the 1980s. Two factors have played an important role in this belated recognition. The first was the addition of a computational semantics for categorial derivations along the lines of the Curry-Howard proofs-as-programs interpretation in van Benthem 1983, reprinted in Buszkowski et al. 1988. Lambek's typelogical grammars, so viewed, could be seen to realize Montague’s compositionality program in an uncompromising way, with the lambda calculus and type theory providing powerful tools to study derivational and lexical semantics. The second factor was the introduction of linear logic (Girard 1987), and with it, the surge of interest in substructural logics. A key insight from linear logic injected into typelogical grammar is the idea that structural rules can be reintroduced in a controlled form by means of so-called modalities: in moving to more discriminating type logics, no expressivity is lost. From a computational point of view, variants of the proof nets of linear logic have provided the basis for typelogical natural language processing.

Typelogical grammar, in its current incarnations, keeps the general architecture of Lambek's original calculi, but extends this to a more articulate vocabulary of type-forming operations. Of central importance are the multiplicative operations, used to model grammatical composition; these form the focus of this article. Next to the multiplicatives one can consider additive or Boolean operations and first or second order quantification to handle phenomena of lexical ambiguity, type polymorphism and the management of feature information. Morrill 1994 is a good source of examples for such extensions.

Outline of this article. We start with the standard Lambek systems. We study their model-theoretic and proof-theoretic aspects (frame semantics, sequent calculus), and the relation between the two (soundness, completeness). We characterize compositional interpretation as a homomorphism relating a syntactic source calculus and a target calculus for meaning assembly. The mapping associates syntactic derivations with semantic readings, expressed as terms of the simply typed linear lambda calculus. In §3 we turn to the expressive limitations of the standard Lambek systems, in syntax and semantics, and study how they can be addressed by systematically extending the categorial architecture in a modular way. Generalizations concern the arity of the type-forming operations (binary composition operations versus unary control operators); multimodal extensions where multiple families of type-forming operations co-exist and communicate via structural interaction principles; one-sorted vs multi-sorted logics (discontinuous calculi); single-conclusion vs multiple-conclusion systems (symmetric calculi); and more structured views of the syntax- semantics interface (continuation semantics). To close the panoramic tour, we present proof nets for the various typelogical systems studied in §3, and we compare these systems with respect to expressivity and computational tractability.

2. The Lambek systems

2.1 Modelling grammatical composition

The type language to be considered in this section consists of a small set of atomic types, and is closed under the binary operations product, left and right division. Some notational conventions: in the specification below, lower case p ranges over atomic types, upper case A, B over arbitrary types. We pronounce A\B as ‘A under B’ and B/A as ‘B over A’ thus to make clear which part of the ‘fraction’ is the denominator (A), and which is the numerator (B).

- Types: A,B ::= p | A ⊗ B | A\B | B/A

In categorizing linguistic expressions, atomic types stand for phrases that one can think of as grammatically ‘complete’. Examples, for English, could be s for declarative sentences (‘Mary dreams’,…), np for noun phrases (‘Mary’, ‘the girl’,…), n for common nouns (‘girl’, ‘smart girl’,…). Division expresses incompleteness: an expression with type A\B (B/A) will produce a phrase of type B when it is put together with a phrase of type A to its left (right). Product types A ⊗ B explicitly express the formation of a complex phrase out of constituent parts of type A and B in that order. So if ‘Mary’ is typed as np and the verb ‘dreams’ as np\s, we obtain s for the combination ‘Mary dreams’ by multiplying out these types: np ⊗ (np\s). Similarly for the combination ‘the girl dreams’, where one would start from a type np/n for the determiner and n for ‘girl’: ((np/n) ⊗ n) ⊗ (np\s).

To make this informal description of the interpretation of the type language precise, we turn to modal logic. Frames F = (W,R), in the categorial setting, consist of a set W of linguistic resources (expressions, ‘signs’), structured in terms of a ternary relation R. This ternary relation stands for grammatical composition, or ‘Merge’ as it is known in the generative tradition: read Rxyz as “the expression x is obtained by putting together the subexpressions y and z”. For comparison: in the Routley-Meyer semantics for relevance logic, R would be the accessibility relation interpreting the fusion operation.

A model M is a pair (F,V) where V is an interpretation (valuation) sending atomic types to subsets of W. For complex types, the valuation respects the clauses below. We write x ∈ V(A) as M,x ⊩ A or, if no confusion will arise, x ⊩ A.

- x ⊩ A ⊗ B iff ∃yz such that Rxyz and y ⊩ A and z ⊩ B

- y ⊩ C/B iff ∀xz, if Rxyz and z ⊩ B, then x ⊩ C

- z ⊩ A\C iff ∀xy, if Rxyz and y ⊩ A, then x ⊩ C

A syntactic calculus for a categorial type language is a deductive system producing statements of the form A ⊢ B (‘B is derivable from A’). A statement A ⊢ B holds in a model (‘M ⊨ A ⊢ B’) if V(A) ⊆ V(B); it is valid (‘⊨ A ⊢ B’) if it holds in all models. For the minimal grammar logic, the set of derivations in the syntactic calculus is freely generated from the axiom and rules of inference below. This minimal system, for historical reasons, is known as NL (for ‘Non-associative Lambek calculus’). The first line states that derivability is a reflexive, transitive relation, i.e., a preorder. The bidirectional (‘if and only if’) inference rules of the second line characterize the product and division operations as a residuated triple as it is known in residuation theory. (Galatos et al. 2007 provides good background reading.) The syntactic calculus, so defined, precisely fits the intended interpretation of the type language, in the sense of the soundness and completeness result below.

- preorder laws:

- A ⊢ A (reflexivity)

- from A ⊢ B and B ⊢ C infer A ⊢ C (transitivity)

- residuation laws: A ⊢ C/B iff A ⊗ B ⊢ C iff B ⊢ A\C

- soundness and completeness: A ⊢ B is provable in the grammatical base logic NL iff ⊨ A ⊢ B, i.e., iff V(A) ⊆ V(B) for every valuation V on every frame F (Došen 1992).

The completeness result for NL does not impose any restrictions on the interpretation of the Merge relation R. This means that the theorems and inference rules of the minimal grammar logic have the status of grammatical invariants: properties of type combination that hold no matter what the structural particularities of individual languages may be. Here are some examples of such universally valid principles. They come in pairs, because of the left-right symmetry relating slash and backslash.

- application:

- (A/B) ⊗ B ⊢ A

- B ⊗ (B\A) ⊢ A

- co-application:

- A ⊢ (A ⊗ B)/B

- A ⊢ B\(B ⊗ A)

- lifting:

- A ⊢ B/(A\B)

- A ⊢ (B/A)\B

- monotonicity: from A ⊢ B and C

⊢ D infer any of the following:

- A ⊗ C ⊢ B ⊗ D

- A/D ⊢ B/C

- D\A ⊢ C\B

Given the universal freely generated syntactic calculus discussed above, the task of providing a categorial grammar for a particular language is reduced to specifying its lexicon: the typelogical framework, in this sense, represents the culminating point of ‘lexicalizing’ grammar formalisms. A categorial lexicon is a relation associating each word with a finite number of types. Given a lexicon Lex, a categorial grammar assigns type B to a string of words w1 ··· wn iff for 1 ≤ i ≤ n, (wi,Ai) ∈ Lex, and X ⊢ B is provable in the syntactic calculus where X is a product formula with yield A1 ··· An. The grammar can be considered adequate if the strings w1 ··· wn are indeed judged to be the wellformed phrases of type B.

Lexical type assignments don't have to be handcrafted: Buszkowski and Penn (1990) model the acquisition of the lexicon as a process of solving type equations. Their unification-based algorithms take function-argument structures as input (binary trees with a distinguised head daughter); one obtains variations depending on whether the solution should assign a unique type to every vocabulary item (so-called rigid grammars), or whether one accepts multiple assignments (k-valued grammars, with k as the maximal lexical ambiguity factor). Kanazawa (1998) studies learnable classes of grammars from this perspective, in the sense of Gold’s notion of identifiability ‘in the limit’. This line of research is by now well established, for typelogical grammars proper, and for the related pregroup formalism discussed under §6.

The radical lexicalism of typelogical grammar means there is no room for construction specific rule stipulations: central grammatical notions, rather than being postulated, must emerge from the type structure. Here are some examples.

- Valency. Intransitive verbs (‘dreams’, ‘sleeps’) form a complete sentence with just a subject noun phrase to their left; transitive verbs (‘sees’, ‘admires’) require both a subject to their left and a direct object to their right. Such selectional requirements are expressed in terms of the directional implications: np\s for intransitive, (np\s)/np for transitive verbs. In a context-free grammar, these distinctions require the postulation of new non-terminals.

- Case. The distinction between phrases that can fulfill any noun phrase selectional requirement (say, proper names) versus case-marked pronouns insisting on playing the subject or direct object role is expressed through higher-order type assignment: proper names can be typed simply as np, subject pronouns (‘he’, ‘she’) as s/(np\s), direct object pronouns (and reflexives) as ((np\s)/np)\(np\s). Under such typing, one correctly distinguishes between the grammatical ‘he sees her’ versus the ill-formed ‘him likes she’.

- Complements vs modifiers. Compare exocentric types (A/B or B\A with A ≠ B) versus endocentric types A/A, A\A. The latter express modification; optionality of modifier phrases follows. Compare ‘the girl’, ‘the smart girl’, ‘the girl (who teases Bill)’, with ‘girl’ typed as n, ‘smart’ as n/n and the relative clause ‘who teases Bill’ as n\n.

- Filler-gap dependencies. Types with nested implications A/(C/B), A/(B\C), etc., signal the withdrawal of a gap hypothesis of type B in a domain of type C. The relative pronoun ‘who’ in ‘the girl who teases Bill’ is typed as (n\n)/(np\s): it combines with a sentence of which the subject must remain unexpressed, as one sees from the ill-formed ‘the girl who Mary teases Bill’.

Sequent calculus, decidability. How can we decide whether a statement A ⊢ B is indeed a theorem of the syntactic calculus? In the presence of expanding rules, such as Lifting, this is not immediately obvious: the transitivity inference from A ⊢ B and B ⊢ C to A ⊢ C involves a ‘lemma’ formula B which disappears from the conclusion, and there is an infinite number of candidate lemma formulas to consider. A key point of Lambek's original papers consists in the reformulation of the syntactic calculus in an equivalent Gentzen-style sequent format, which enjoys cut-elimination.

Sequents for the grammatical base logic NL are statements X ⇒ A, where X (the antecedent) is a structure, and A (the succedent) a type formula. The antecedent structure is required to be non-empty. A structure is either a single formula, or a binary branching tree with formulas at the leaves. In the sequent rules below, the tree structure of the antecedent is indicated by bracketing (–,–). The notation X[Y] refers to a structure X containing a distinguished substructure Y. For every connective (type-forming operation), there are two inference rules, introducing the connective to the left or to the right of the derivability arrow. In this sequent presentation, as in Gentzen's propositional logics, Cut is an admissible rule: every proof of a theorem that makes use of Cut inferences can be transformed into an equivalent cut-free derivation. Backward-chaining proof search in the cut-free system respects the subformula property and immediately yields a decision procedure.

NL: Sequent Calculus

A⇒A Ax

Y⇒A X[A]⇒B X[Y]⇒B Cut

X⇒A Y⇒B (X,Y)⇒A⊗B (⊗R)

X[(A,B)]⇒C X[A⊗B]⇒C (⊗L)

Y⇒B X[A]⇒C X[(Y,B\A)]⇒C (\L)

(B,X)⇒A X⇒B\A (\R)

Y⇒B X[A]⇒C X[(A/B,Y)]⇒C (/L)

(X,B)⇒A X⇒A/B (/R)

A landscape of calculi. From the pure residuation logic, one obtains a hierarchy of calculi by attributing associativity and/or commutativity properties to the composition operation ⊗. These calculi reflect different views on how the grammatical material is structured. The table below summarizes the options.

logic structure associative commutative NL tree – – L string ✓ – NLP mobile – ✓ LP (=MILL) multiset ✓ ✓

For reasons of historical precedence, the system of Lambek 1958, with an associative composition operation, is referred to as L; the pure residuation logic NL, i.e., the non-associative version of L, was introduced later in Lambek 1961. Addition of commutativity turns L and NL into LP and NLP, respectively. LP, Lambek calculus with Permutation, was introduced in van Benthem 1983; in retrospect, this the implication/fusion fragment of Multiplicative Intuitionistic Linear Logic (MILL). The commutative variant of NL has been studied by Kandulski; it has not found significant linguistic applications. For the four systems in the hierarchy, one writes (N)L(P)* for the (non-conservative) extension which allows empty antecedents.

In the presentation of the syntactic calculus, the structural options take the form of non-logical axioms (postulates), which one adds to the residuation laws. In the sequent presentation, one has corresponding structural rules. The sequent calculus for L(P) also allows for a sugared presentation where the antecedent is treated as a list or a multiset of formulas; the inference steps for the structural rules can then be left implicit. With respect to the frame semantics, each of the structural options introduces a constraint on the interpretation of the Merge relation R⊗. Completeness for the calculi with structural options is then with respect to all frames respecting the relevant constraints (Došen 1992). The associative calculus L in fact allows more specific models where we read Rxyz as x = y · z, with · a binary concatenation operation. Pentus (1995) presents a quite intricate proof that L is indeed complete with respect to such free semigroup models (or language models, as they are also called).

- structural postulates:

- A ⊗ B ⊢ B ⊗ A (commutativity)

- A ⊗ (B ⊗ C) ≡ (A ⊗ B) ⊗ C (associativity)

- corresponding structural rules for the sequent presentation:

W[(Y,X)]⇒C W[(X,Y)]⇒C (comm.) W[((X,Y),Z)]⇒C W[(X,(Y,Z))]⇒C (assoc.) - frame constraint for commutativity: ∀xyz(Rxyz implies Rxzy)

- frame constraint for associativity (rebracketing to the left): ∀xyzrs(Rrxs and Rsyz imply ∃t(Rrtz and Rtxy)); similarly for the other direction

A characteristic property of LP is the collapse of the distinction between left and right division: A\B and B/A become interderivable. From a syntactic point of view, the global availability of associativity and/or commutativity in LP would entail that arbitrary changes in constituent structure and/or word order cannot affect the well-formedness of an expression — a position that few linguists will subscribe to. Whether global associativity as we find it in L is a viable option for syntax is open to dispute. Lambek points to cases of overgeneration resulting from free rebracketing in his 1961 paper, but later reverts to the associative view. In the following section, we will see that LP is perfectly legitimate as a calculus of meaning composition. In §3, we discuss controlled forms of structural reasoning, anchored in lexical type assignment, as an alternative to the problematic all-or-nothing options.

2.2 The syntax-semantics interface

In order to give equal weight to syntactic and semantic considerations, we can set up a categorial grammar as a pair of calculi linked by a compositional interpretation: a syntactic source calculus, a semantic target calculus, and a homomorphism mapping types and derivations of the source to the corresponding types and derivations of the target. In this section, we work out this architecture for the standard Lambek calculi. In the following section, we will see how the extended categorial type logics reflect different views on the division of labour between syntax and semantics, and hence on the amount of work that has to be done to achieve a compositional mapping between the two.

(N)L{n,np,s}/,\ (·)′ LP{e,t}→ Syntax homomorphism Semantics

The semantic study of categorial deduction started with van Benthem 1983. In line with the Curry-Howard ‘formulas-as-types, proofs-as-programs’ method, derivations in the various categorial calculi are associated with terms of suitable fragments of the lambda calculus. For the sake of simplicity, we restrict attention to the implicational vocabulary: the directional slashes for (N)L, and the non-directional implication of LP.

Let us start with the Curry-Howard correspondence for LP. The choice of LP as the calculus of natural language semantics captures the fact that meaning composition is a resource-sensitive process: in interpreting a derivation, no material can be copied or thrown away. Let us refer to the terms of the simply typed linear lambda calculus as Λ(Lin). In the absence of Contraction and Weakening, this is the smallest set such that, given a denumerably infinite set of variables Var,

- x ∈ Λ(Lin) if x ∈ Var;

- λx.M ∈ Λ(Lin) if x ∈ Var, M ∈ Λ(Lin), and there is exactly one free variable occurrence of x in M;

- (M N) ∈ Λ(Lin) if M ∈ Λ(Lin), N ∈ Λ(Lin), and the sets of free variables of M and N are disjoint.

From Λ(Lin), one obtains Λ(LP), the terms in Curry-Howard correspondence with LP derivations, by adding one extra restriction, reflecting the requirement that sequent antecedents must be non-empty: every subterm contains at least one free variable. The derivations of LP are now read as judgements of a type inference system for Λ(LP). Derivations are given in the natural deduction style, with elimination and introduction rules for the linear implication →. Judgements then are sequents of the form Γ ⊢ M : B. Γ is a non-empty multiset of typing declarations x1 : A1, …, xn : An; the Ai and B are linear implicative types, and M is a Λ(LP) term of type B built out of the xi of type Ai. Axiom sequents are of the form x : A ⊢ x : A. Elimination and Introduction rules for the linear implication are given below. In the Introduction rule, x ∉ dom(Γ); in the Elimination rule dom(Γ) ∩ dom(Δ) = ∅.

Γ,x : A ⊢ M : B Γ ⊢ λx.M : A → B →I

Γ ⊢ M : A → B Δ ⊢ N : A Γ,Δ ⊢ (M N) : B →E

The syntactic calculi (N)L, similarly, are in Curry-Howard correspondence with directional linear lambda terms (Wansing 1992). There is no standard notation. In the directional typing rules below, we use λr vs λl for the image of the slash and backslash Introduction rules; in the case of the Elimination rules, we have right and left function application, with the triangle pointing to the function. The antecedent is now a (bracketed) string of typing declarations xi : Ai.

(Γ,x : A) ⊢ M : B Γ ⊢ λrx.M : B/A I/

(x : A,Γ) ⊢ M : B Γ ⊢ λlx.M : A\B I\

Γ ⊢ M : B/A Δ ⊢ N : A (Γ,Δ) ⊢ (M ⊲ N) : B E/

Γ ⊢ N : A Δ ⊢ M : A\B (Γ,Δ) ⊢ (N ⊳ M) : B E\

The source and target calculi each have their own set of basic types, motivated by syntactic and semantic considerations respectively. At the syntactic end, one could discriminate between sentences s, noun phrases np, common nouns n, for example. At the semantic end, for a simple extensional interpretation, one could introduce two basic types, e and t. Set-theoretic interpretation for the semantic types is then given in terms of a non-empty set of individuals/entities E and the truth values {0,1}. Denotation domains Dom(A) for semantic types A are set up such that Dom(e) = E, Dom(t) = {0,1} and Dom(A\B) = Dom(B/A) is the set of functions from Dom(A) to Dom(B).

Consider next the homomorphic mapping from the syntactic source calculus to the semantic target calculus. The mapping is specified at the level of types and at the level of proofs (terms). Setting up the mapping at the level of atomic types as below, sentences denote truth values; (proper) noun phrases individuals; common nouns functions from individuals to truth values. For complex syntactic types, the interpretation function sends the two directional implications to the linear implication of the target calculus. At the level of proofs (terms), likewise, the directional constructs of the syntactic source calculus are identified in the interpretation. (We use a primed version for the target variable corresponding to a source variable, as a reminder of the fact that the type of these expressions is different.)

- types: (np)′ = e, (s)′ = t, (n)′ = e → t, (A\B)′ = (B/A)′ = A′ → B′

- terms: (x)′ = x′, (λrx.M)′ = (λlx.M)′ = λx′.(M)′, (N ⊳ M)′ = (M ⊲ N)′ = ((M)′ (N)′)

In the previous sections, we have seen that the theorems of NL, L and LP form a proper inclusion hierarchy. The semantic counterpart is that in moving to more discriminating syntactic calculi, more and more LP terms are lost in translation: desirable recipes for meaning assembly are often unobtainable as the image of (N)L proofs. For the three calculi, the table below gives a characteristic type transition. The second row has the directional proof terms associated with the (N)L type transitions; the third row gives the corresponding LP term, obtained via the translation homomorphism, in the case of (N)L, or directly for the LP case.

| NL: argument lowering | L: composition | LP: argument raising |

| (B/(A\B))\C ⊢ A\C | A\B ⊢ (A\C)/(B\C) | A → (B → C) ⊢ ((A → C) → C) → (B → C) |

| z ⊢ λlx.((λry.(x ⊳ y)) ⊳ z) | y ⊢ λrzλlx.((x ⊳ y) ⊳ z) | |

| z′ ⊢ λx′.(z′ λy′.(y′ x′)) | y′ ⊢ λz′λx′.(z′ (y′ x′)) | x ⊢ λwλz.(w λy.((x y) z)) |

Argument lowering is valid in NL, hence also in L, LP. Function composition depends on associativity; it is invalid in NL, valid in L(P). Function composition has been used to build up partial semantic representations, running in parallel with the incremental (left-to-right) processing of a string. Argument raising, finally, is valid only in LP, i.e., this form of meaning assembly cannot be obtained as the image of a derivation in any of the syntactic calculi (N)L. But, as we will see below, this transition plays an important role in the analysis of natural language scope ambiguities.

Derivational versus lexical semantics. The constraints imposed by resource-sensitivity put severe limitations on the expressivity of the derivational semantics. To some extent, these can be overcome at the level of lexical semantics. The Curry-Howard term associated with a derivation, seen as a program for meaning assembly, abstracts from the contribution of particular lexical items. The semantic specification for a lexical item of type A takes the form of a lambda term of the corresponding semantic type A′, but this lambda term is no longer required to be linear. Below some examples of non-linear lexical meaning recipes. The specification for the reflexive pronoun ‘himself’ is a pure combinator (a closed term): it identifies the first and second argument of a binary relation. The terms for the relative pronoun ‘that’ or for the determiners ‘a, some’ have an abstraction that binds two occurrences of a variable, so as to compute the intersection of their two (e → t) arguments (noun and verb phrase), and to test the intersection for non-emptiness in the case of ‘some’.

| NL type | LP type | Lexical recipe | |

| himself | ((np\s)/np)\(np\s) | (e→e→t)→e→t | λRλx.((R x) x) |

| a, some | (s/(np\s))/n | (e→t)→(e→t)→t | λPλQ.(∃ λx.((P x)∧(Q x))) |

| that | (n\n)/(np\s) | (e→t)→(e→t)→e→t | λPλQλx.((P x)∧(Q x))) |

The interplay between lexical and derivational aspects of meaning assembly is illustrated with the example below. Notice that the linear proof term reflects the derivational history (modulo directionality); after substitution of the lexical recipes and β conversion this transparency is lost. The full encapsulation of lexical semantics is one of the strong attractions of the categorial approach.

- string: ‘a boy hurt himself’

- NL derivation: (x : (s/np\s)/n,y : n),(z : ((np\s)/np,w : ((np\s)/np)\(np\s)) ⊢ M : s

- corresponding LP proof term: (M)′ = ((x′ y′) (w′ z′))

- lexical insertion: x′ ↦ λPλQ.(∃ λx.((P x)∧(Q x))),y′ ↦ boy,z′ ↦ hurt,w′ ↦ λRλx.((R x) x)

- final result after β conversion: (∃ λx.((boy x) ∧ (hurt x x)))

3. Extended typelogical grammars

The strategy of combining resource-sensitivity at the level of derivational semantics with non-linear lexical meaning assignments is not a general solution for the expressive limitations of the syntactic calculi originally conceived by Lambek. A type of problem that necessitates extensions of the derivational machinery itself has to do with the inability of (N)L to establish discontinuous dependencies between grammatical resources in a satisfactory way. Such dependencies manifest themselves in two situations.

Extraction. These are phenomena which generative grammar refers to as ‘overt displacement’, as we find it in wh ‘movement’ constructions. Compare the wh phrases ‘what — annoys Mary’ versus ‘what Mary put — there’, as they would occur in a context ‘I know what annoys Mary’ or ‘I know what Mary put there’. The dash marks the spot where a noun phrase would fit in a normal declarative sentence: ‘this record annoys Mary’, ‘Mary put the record there’. Assigning the type wh/(np\s) to ‘what’, we can derive wh for the first example, with a np hypothesis in the left-peripheral subject position. If this hypothesis (the ‘gap’) occurs internally, as in the second example, it is unreachable for (N)L: one cannot derive np\s for ‘Mary — put there’ (nor s/np, for that matter, which would require a right-peripheral gap).

Infixation. Typical examples would be cases of non-local semantic construal, as in ‘Alice knows someone is cheating’, with a wide-scope reading for ‘someone’: ‘there is a particular person x such that Alice thinks x is cheating’. The quantifier phrase ‘someone’ occupies the structural position where in fact the np-type hypothesis is needed, and realizes its semantic effect at some higher sentential level. With a type-assignment s/(np\s) for ‘someone’, there is no derivation producing the non-local reading. In generative grammar, one would call the non-local construal an instance of ‘covert’ movement.

The extensions of the basic calculus to be discussed in the sections below represent the strategies that are being pursued to find principled solutions to these problems of discontinuous dependencies.

3.1 Multimodal systems, structural control

The key idea of Multimodal Categorial Grammars, introduced in the 1990s, is simple: instead of a single family of residuated type-forming operations, one considers multiple families, co-existing in one logic. To discriminate between these families, type-forming operations are decorated with a mode index /i, ⊗i, \i; likewise for the interpreting Merge relations Ri. Each family has the same logical rules (the residuation laws). But they can differ in their structural properties. In particular, they can interact in terms of structural rules that mix different modes. At the level of the interpretation, such interaction principles introduce frame constraints, similar to the constraints that went with the move from NL to L and LP.

For an example, let us return to the case of non-peripheral extraction ‘(what) Mary put — there’. Assume we have two modes of composition: ⊗c and ⊗d. Regular valency requirements are expressed in terms of the slashes for the ⊗c product. Discontinuous dependencies are handled by structural postulates of mixed associativity and commutativity, and an inclusion postulate expressing the relative ‘strength’ of ⊗c and ⊗d. The type-assignment to the pronoun ‘what’ provides access to these postulates, so that it can establish a link with a non-subject np hypothesis, also in a sentence-internal position.

- mixed associativity: (A⊗c B)⊗d C ⊢ A⊗c(B ⊗d C)

- mixed commutativity: (A⊗c B) ⊗d C ⊢ (A ⊗d C) ⊗c B

- inclusion: A ⊗d B ⊢ A ⊗c B

- type for ‘what’ related to a non-subject np hypothesis: wh/c(s/d np)

We can now succesfully demonstrate that the phrase ‘Mary put — there’ is of type s/dnp, as shown in the sketch of a derivation below. By means of the mixed interaction postulates, the np hypothesis finds its way to the non-peripheral position where it is required by the verb ‘put’. Once it has reached its home position, the inclusion step switches from the structurally permissive mode d to the c mode expressing regular subcategorization.

⋮

inclusion

mixed comm.

mixed assoc.

np ⊗c (((((np\cs) /c pp) /c np) ⊗c np) ⊗c pp) ⊢ s np ⊗c (((((np\cs) /c pp) /c np) ⊗d np) ⊗c pp) ⊢ s np ⊗c (((((np\cs) /c pp) /c np) ⊗c np) ⊗d np) ⊢ s (np ⊗c ((((np \c s) /c pp) /c np) ⊗c pp)) ⊗d np) ⊢ s np ⊗c (((np \c s) /c pp) /c np) ⊗c pp) ⊢ s /d np Mary put there

Through multimodal interaction principles, one avoids the overgeneration that would result from global Associativity and/or Commutativity options for a single composition operation ⊗. Undisciplined use of multimodality, however, could easily lead to the introduction of construction-specific mode distinctions, thus compromising one of the main attractions of the typelogical approach. The theory of structural control operators to be discussed below offers a more principled solution to the type of problem multimodal approaches address.

Control operators. Linear Logic, by dropping the structural rules of Contraction and Weakening, splits the conjunction ‘&’ of classical logic in a multiplicative and an additive connective. This move to a resource-sensitive logic can be obtained without sacrificing expressivity: the unary modalities ‘!’,‘?’ allow one to reintroduce Contraction/Weakening in a controlled form and thus to recover classical logic from within the linear setting.

A similar strategy can be followed within typelogical grammar (Moortgat 1996, Kurtonina 1995). The type language is extended with a pair of unary operators which we will write ◊,□; formulas now are built out of atomic formulas p with the unary and binary type-forming operations. As with the binary vocabulary, we start with a minimal logic for ◊,□; facilities for structural control can then be added in the form of extra postulates. The truth conditions below characterize the control operators ◊ and □ as inverse duals with respect to a binary accessibility relation R◊. This interpretation turns them into a residuated pair, just like composition and the left and right division operations.

- Formulas: A,B ::= p | ◊A | □A | A\B | A ⊗ B | A/B

- Interpretation:

- x ⊩◊A iff ∃y(R◊xy and y ⊩ A)

- x ⊩□A iff ∀y(R◊yx implies y ⊩ A)

- Residuation laws: ◊A ⊢ B iff A ⊢ □B

We saw that in the minimal logic NL completeness with respect to the frame semantics for composition and its residuals doesn't impose restrictions on the interpretation of the merge relation R⊗. The same holds for R◊ in the pure residuation logic of ◊,□. From the residuation laws, one easily derives monotonicity of the control operators (A ⊢ B implies ◊A ⊢ ◊B and □A ⊢ □B); their compositions satisfy ◊□A ⊢ A and A ⊢ □◊A. These properties can be put to use in refining lexical type assignment so that selectional dependencies are taken into account. Compare the effect of an assignment A/B versus A/◊□B. The former will produce an expression of type A in composition both with expressions of type B and ◊□B, the latter only with the more specific of these two, ◊□B. An expression typed as □◊B will resist composition with either A/B or A/◊□B. Bernardi and Szabolcsi (2008) present accounts of licensing of polarity-sensitive expressions and scope construal based on (a generalization of) this modalisation strategy.

For sequent presentation of ◊,□, antecedent tree structures now are built out of formulas by means of unary and binary tree-building operations, (–) and (–,–). The residuation pattern then gives rise to the following left and right introduction rules. Cut elimination carries over straightforwardly to the extended system, and with it decidability and the subformula property.

Γ[(A)] ⇒ B Γ[◊A] ⇒ B ◊L

Γ ⇒ A (Γ) ⇒ ◊A ◊R

Γ[A] ⇒ B Γ[(□A)] ⇒ B □L

(Γ) ⇒ A Γ ⇒ □A □R

Controlling structural resource management. Let us turn then to the use of ◊,□ as control devices. The control can take two forms: one is to license access to a structural option that would be overgenerating if globally available; the second is to occasionally block a structural capability of the grammar. For the licensing type of control, compare the multimodal treatment of extraction in terms of a distinction ⊗c vs ⊗d, with the ◊-controlled version below, which relies on a single binary composition operation, and narrows down the ‘movement’ potential entirely to ◊-marked resources.

- ◊-controlled mixed associativity: (A ⊗ B) ⊗ ◊C ⊢ A ⊗ (B ⊗ ◊C)

- ◊-controlled mixed commutativity: (A ⊗ B) ⊗ ◊C ⊢ (A ⊗ ◊C) ⊗ B

- type for ‘what’ related to a non-subject np hypothesis: wh/(s/◊□np)

The derivation sketch below illustrates the interplay between logical and structural reasoning. As long as the gap subformula ◊□np carries the licensing ◊, the structural rules are applicable; as soon as it has found the appropriate structural position where it is selected by the transitive verb, it can be used as a regular np, given the type transition ◊□np ⊢ np which obviates the need for the inclusion postulate of the earlier account. Related analyses of extraction based on the ‘!’ modality of Linear Logic can be found in (Barry et al. 1991), with the dereliction rule !A ⊢ A taking the place of ◊□A ⊢ A for gap introduction.

⋮

since ◊□A ⊢ A

◊ mixed comm.

◊ mixed assoc.

np ⊗ (((((np\s)/pp)/np) ⊗ np) ⊗ pp) ⊢ s np ⊗ (((((np\s)/pp)/np) ⊗ ◊□np) ⊗ pp) ⊢ s np ⊗ (((((np\s)/pp)/np) ⊗ pp) ⊗ ◊□np) ⊢ s (np ⊗ ((((np\s)/pp)/np) ⊗pp)) ⊗ ◊□np ⊢ s np ⊗ ((((np \ s) / pp) / np) ⊗ pp) ⊢ s / ◊□np Mary put there

For the type of control where a structural transformation has to be blocked, we can turn to extraction islands: phrases that do not allow a gap, such as adjuncts. Compare ‘Mary fell asleep during the concert’, with the adjunct phrase ‘during the concert’ of type s\s, with the ill-formed ‘I know what Mary fell asleep during —’. To make the adjunct inaccessible, one can assign ‘during’ the type (□(s\s))/np: for the □ to be eliminated, the whole adjunct phrase has to be enclosed by a structural ◊, which then acts as a barrier for extraction. Without such a barrier, the extraction postulates as formulated above would allow the ill-formed sentence to be derived. This strategy of projecting island barriers from lexical type assignments was introduced by Morrill; see the discussion of ‘structural inhibition’ in Morrill 1994.

The two uses of the control operators illustrated here give rise to a general theory of substructural communication relating the different type logics in the categorial landscape. Let Source and Target be neighbouring logics differing in some structural option, for example NL versus L, or L versus LP. Kurtonina and Moortgat (1997) establish a set of embedding theorems of the following form:

- translation: μ : Source(/,⊗,\) → Target(◊,□,/,⊗,\), such that

- A ⊢ B is derivable in the Source logic iff μ(A) ⊢ μ(B) is derivable in the Target logic

In the case where the Target logic is more discriminating than the Source, the translation implements control of the licensing type. The other direction of communication obtains when the Target system is less discriminating than the Source. The modal decoration effected by the translation in this case blocks the applicability of structural rules.

Frame constraints, term assignment. The frame semantics for the pure residuation logic does not impose restrictions on the interpretation of the R◊ and R⊗ relations. In the case of extended versions with modally controlled structural postulates, there is a frame constraint for each structural postulate, and completeness holds with respect to appropriately restricted frames.

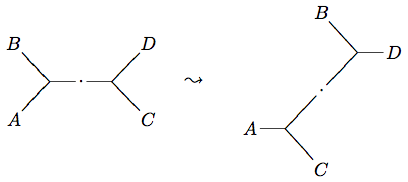

x y z \ / | s t \ / r

z | y t′ \ / x s′ \ / r

z | x t′ \ / s′ y \ / r input output ◊ mixed ass. output ◊ mixed comm.

Depicting R⊗ with a branching and R◊ with a non-branching node, in the case of the modally controlled extraction postulates discussed here, we have the constraint that for all x,y,z,r,s,t composed as in the input configuration below, there are alternative internal points s′, t′ connecting the root r to the leaves x,y,z.

For the mapping between the modally extended syntactic source calculus and the semantic target calculus LP, we have two options. The first is to treat ◊,□ purely as syntactic control devices. One then sets (◊A)′ = (□A)′ = A′, and the inference rules affecting the modalities leave no trace in the LP term associated with a derivation. The second is to actually provide denotation domains for the new types, and to extend the term language accordingly. For the minimal logic of ◊,□, one can turn to Wansing (2002), who develops a set-theoretic interpretation of minimal temporal intuitionistic logic. The temporal modalities of future possibility and past necessity are indistinguishable from the control operators ◊,□, prooftheoretically and as far as their relational interpretation is concerned. Morrill (1990) gives an interpretation in intensional lambda calculus for a stronger S5 modality, which assumes a universal accessibility relation at the level of the frame semantics. Denotations for modalized formulas A, in this setting, are functions from indices (situations, reference points) to A denotations.

Discussion. The control operators discussed here bear a strong resemblance to the syntactic control features used in Stabler's algebraic formulation of Minimalist Grammars (Stabler 1997, 1999). These features, like ◊,□, come in matching pairs of a licensor ‘+f’ and a licensee ‘−f’ that have to cancel for a derivation to be successful; movement is triggered by the presence of an appropriate licensor feature. For an explicit comparison of the mimimalist and the typelogical view on structural control, see Vermaat (2004).

Whether one wants the full expressivity of modally controlled structural rules is a matter of debate. Vermaat (2006) takes the strong position that the cross-linguistic possibilities for displacement can be fully captured in terms of the right branch extraction postulates discussed above, together with their mirror images for extraction from left branches. Under this view, which is consistent with the radical lexicalism of the original categorial systems, this postulate set is fixed at the level of Universal Grammar, and variation is reduced to the language-specific lexica that determine which of the available extraction patterns are activated.

3.2 The logic of discontinuity

The discontinuous Lambek calculi that have been developed by Morrill and co-workers (see Morrill et al. 2007, 2009) and Chapter 6 of the forthcoming monograph (Morrill 2010) extend the associative Lambek calculus L. We saw that L is the logic of strings composed by concatenation. The discontinuous calculi enrich the ontology with a notion of split strings: expressions consisting of detached parts, as in the idiom ‘take — to task’. To build the phrase ‘take someone to task’, one wraps the discontinuous expression around its object. In this particular example, there is a single point of discontinuity, but one can also think of cases with more than one split point. This naturally leads to two notions of discontinuous composition: a deterministic view, where wrapping targets a particular split point, and a non-deterministic view where the targeted split point is arbitrary. In the case of expressions with a single split point, these two notions coincide.

The vocabulary of DL (Discontinuous Lambek Calculus) consists of residuated families of unary and binary type-forming operations. A representative sample is given below. For the binary case, in addition to the concatenation product of L and the residual slash operations, we have a discontinuous (wrapping) product ⊙, with residual infixation ↓ and extraction ↑ operations. For the deterministic interpretation, the discontinuous type-forming operations have an indexed form ↑k, ⊙k, ↓k explicitly referring to the k-th split point of their interpretants. The function of the unary operations is to control the creation and removal of split points. As in the binary case, we have non-deterministic operations (bridge ∧, split ∨) and indexed forms (∧k,∨k) with a deterministic interpretation.

A,B ::= … | A ⊙ B | A ↓ B | B ↑ A | ∧A | ∨A

On the model-theoretic side, an innovative feature of DL is the move to a multi-sorted interpretation. The key notion is that of a graded algebra: a freely generated algebra (W,·,1, ⎵) where the monoid (W,·,1) of the interpretation of L* is augmented with a distinguished generator ⎵ called the separator. The sort of an expression s, σ(s), is given by the number of occurrences of the separator in it. Expressions of the nullary sort are the familiar strings for the language models of L. Expressions of sort n > 0 are split strings, with n marked positions where other expressions can be substituted.

Interpretation of types is now relativized to sorted domains Wi = {s | σ(s) = i} for i ≥ 0. Frames, accordingly, are sorted structures ({Wi}i∈N,Rbridge,Rwrap,·,{bridgek}k∈N*,{wrapk}k∈N*) with n + 1-ary relations for the n-ary type-forming operations with a non-deterministic interpretation and n-ary operations (functions) for the n-place deterministic vocabulary items. The operation · : Wi × Wj → Wi+j here is the sorted version of the concatenation operation of L.

relation/operation interpretation Rwrap ⊆ Wi+1 × Wj × Wi+j the smallest relation s.t. Rwrap(u⎵w,v,uvw) Rbridge ⊆ Wi+1 × Wi the smallest relation s.t. Rbridge(u⎵v,uv) wrapk : Wi+1 × Wj → Wi+j wrapk(s,t) is the result of replacing the k-th separator in s by t bridgek : Wi+1 → Wi bridgek(s) is the result of replacing the k-th separator in s by 1

An interpretation for DL associates atomic types of sort i to subsets of Wi. Interpretation clauses for the new complex types are standard. In the light of the illustrations below, we give the clauses for non-deterministic bridge/split, and the wrapping family. The sort of the types can be readily computed from the sort information for the interpreting operations/relations.

- s ⊩ ∧A iff ∃t(Rbridge(t,s) and t ⊩ A)

- t ⊩ ∨B iff ∀s(Rbridge(t,s) implies s ⊩ B)

- s ⊩ A ⊙ B iff ∃s′s″(Rwrap(s′,s″,s) and s′ ⊩ A and s″ ⊩ B)

- s″ ⊩ A ↓ C iff ∀s′s(if Rwrap(s′,s″,s) and s′ ⊩ A, then s ⊩ C)

- s′ ⊩ C ↑ B iff ∀s″s(if Rwrap(s′,s″,s) and s″ ⊩ B, then s ⊩ C)

On the proof-theoretic side, a cut-elimination theorem for a sequent presentation of DL establishes decidability. The DL sequent rules are shown to be sound with respect to the intended interpretation; a completeness result so far has not been obtained. For the mapping from the syntactic source calculus DL to the semantic target calculus LP, the unary type-forming operations are considered inert: the inference rules for these connectives, consequently, leave no trace in the LP proof term associated with a derivation in the syntactic source calculus. The continuous and discontinuous families, for the rest, are treated exactly alike. Specifically, the infixation and extraction operations are mapped to LP function types, like the slashes.

Illustration. DL has been successfully applied to a great number of discontinuous dependencies, both of the overt and of the covert type. The non-deterministic operations have been used to model particle shift and complement alternation constructions. The deterministic operations of sort 1 (single split point) are used in the analysis of non-peripheral extraction, discontinuous idioms, gapping and ellipsis, quantifier scope construal, reflexivisation, pied-piping and the Dutch cross- serial dependencies, among others.

We illustrate the non-deterministic use of DL with English particle shift, using labeled natural deduction format to display derivations, with items Form-Meaning:Type. A verb-particle combination ‘call — up’ can be lexically typed as ∨(np\s) ↑ np of sort 2, with an internal split point, and a right-peripheral one. Elimination of the non-deterministic extraction operation ↑ offers a choice as to whether wrapping will affect the first or the second split point. The first option is displayed below. The remaining separator is removed in the elimination step for ∨, with the continuous verb phrases ‘call Mary up’ or ‘call up Mary’ as result.

called ⋅ ⎵ ⋅ up ⋅ ⎵ −phone : ∨(np\s) ↑ np Mary − m : np E↑

called ⋅ Mary ⋅ up ⋅ ⎵ − (phone m) : ∨(np\s) E∨ called ⋅ Mary ⋅ up − (phone m) : np\s

For an example involving covert discontinuity, consider quantifier scope construal. DL provides a uniform type assignment to generalized quantifier expressions such as ‘everyone’, ‘someone’: (s ↑ np) ↓ s. In the syntactic source calculus, this type assignment allows a quantifier phrase QP to occupy any position that could be occupied by a regular non-quantificational noun phrase. Semantically, the image of the ↑ Introduction rule at the level of the semantic target calculus LP binds an np type hypothesis at the position that was occupied by the QP (the a − x : np premise, with a and x structural and semantic variables for the np hypothesis). The image of the ↓ Elimination rule applies the term representing the QP meaning to this abstract. Scope ambiguities arise from derivational ambiguity in the source calculus DL. The derivation below results in a non-local reading ‘there is a particular x such that Mary thinks x left’. Looking upward from the conclusion, the last rule applied is ↓ Elimination, which means the QP takes scope at the main clause level. An alternative derivation, producing the local scope reading, would have the / Elimination rule for ‘thinks’: (np\s)/s as the last step.

… a − x : np … ⋮ Mary ⋅ thinks ⋅ a ⋅ left − ((thinks (left x)) m) : s

↑I

↓Esomeone − ∃ : (s ↑ np) ↓ s Mary ⋅ thinks ⋅ ⎵ ⋅ left − λx.((thinks (left x)) m) : s ↑ np Mary ⋅ thinks ⋅ someone ⋅ left − (∃ λx.((thinks (left x)) m)) : s

Discussion. The basis for the DL extensions is the associative calculus L. As we saw above, a global insensitivity to phrase structure is a source of overgeneration, unless blocked by explicit island modalities. In the development of DL nothing seems to hinge on the associativity of the base system: it would seem entirely feasible, in other words, to develop DL as an extension of a non-associative basis, allowing for the representation of constituent-structure information. In the interpreting frames, one would then start from a graded groupoid rather than a monoid. The fact that the DL framework can readily accommodate a string or tree-based point of view testifies to the versatility of the approach.

3.3 Symmetric categorial grammar

The extensions of the Syntactic Calculus that we studied in the previous sections all obey an “intuitionistic” restriction: in statements A1,…,An ⊢ B the antecedent can consist of multiple formulas (configured as a ⊗ tree in the non-associative case, a list or a multiset in the case of L, LP), the succedent is a single formula.

In a remarkable paper antedating Linear Logic by five years, Grishin (1983) presents a number of extensions of the Lambek calculus with the common characteristic that derivability holds between hypotheses A1,…,An, taken together by means of a multiplicative conjunction, and multiple conclusions B1,…,Bm, combined by means of a multiplicative disjunction. Linguistic exploration of Grishin's ideas has started in recent years. In this section, we introduce a multiple-conclusion categorial system that is already well-studied: LG (for Lambek-Grishin calculus). We present syntax and relational semantics of the system; in the next section, we turn to its computational interpretation.

preorder laws A ⊢ A

A ⊢ B B ⊢ C A ⊢ C (dual) residuation laws)

A ⊢ C/B A ⊗ B ⊢ C B ⊢ A\C

B ⦸ C ⊢ A C ⊢ B ⊕ A C ⊘ A ⊢ B interaction principles

A ⊗ B ⊢ C ⊕ D C ⦸ A ⊢ D / B

A ⊗ B ⊢ C ⊕ D B ⊘ D ⊢ A \ C

A ⊗ B ⊢ C ⊕ D C ⦸ B ⊢ A \ D

A ⊗ B ⊢ C ⊕ D A ⊘ D ⊢ C / B The Lambek-Grishin calculus LG

LG consists of a symmetric version of the pure residuation logic NL together with structure-preserving interaction principles relating the conjunctive and disjunctive operations. We discuss these components in turn. For symmetry, the inventory of type-forming operations is doubled: in addition to the NL operations ⊗,\,/ (product, left and right division), there is a second family ⊕,⊘,⦸: coproduct, right and left difference. The two families are related by an arrow reversal symmetry δ, which translates type formulas according to the table below.

δ

C/B A ⊗ B A\C B ⦸ C B ⊕ A C ⊘ A

At the level of derivability, we then have A ⊢ B iff δ(B) ⊢ δ(A): for every theorem or rule of NL, we also find its image under δ in LG. A note about the notation: we read B ⦸ A as ‘B from A’ and A ⊘ B as ‘A less B’, i.e., the quantity that is subtracted is put under the circled (back)slash, just as we have the denominator under the (back)slash in the case of left and right division types. In a formulas-as-types spirit, we will feel free to refer to the division operations also as implications, and to the difference operations as coimplications.

Communication between the product and coproduct families requires the addition of interaction principles to the (dual) residuation laws. The principles above take the form of inference rules obtained from the following recipe: from A ⊗ B ⊢ C ⊕ D in the premise, one selects a product and a coproduct term; in the conclusion, one simultaneously introduces the residual operations for the remaining two terms. Using the (dual) residuation laws, one derives the following patterns from the interaction principles. Alternatively, one can take (P1)–(P4) as primitive postulates, and obtain the interaction principles as derived inference rules, using transitivity and the (dual) residuation laws.

(P1) (A ⦸ B) ⊗ C ⊢ A ⦸ (B ⊗ C) (P2) C ⊗ (A ⦸ B) ⊢ A ⦸ (C ⊗ B) (P3) C ⊗ (B ⊘ A) ⊢ (C ⊗ B) ⊘ A (P4) (B ⊘ A) ⊗ C ⊢ (B ⊗ C) ⊘ A

Derivability patterns of the form (P1)–(P4) have been called linear distributivity principles — linear, because they do not duplicate any of the terms involved. In LG, in addition to being linear, they respect the word order and phrase structure information encoded in the non-commutative, non-associative type-forming operations.

Illustration. The interaction principles of LG provide a particularly direct way of capturing phenomena that depend on infixation rather than concatenation. The derivations below (using monotonicity, and (dual) residuation steps) show how the same pieces of information (the same premises) can be used either to introduce an implication B\C on the left of the turnstile, or a coimplication B ⊘ C on the right. The first option leads to a rule of Application — the central composition operation of standard Lambek calculus. The second option leads to a Co-Application variant. Although these two rules are derived from the same premises, there is an important difference between them. When the implication B\C composes with its argument, it must stay external to X. In the case of the coimplication, when X is some product of factors A1,…,An, the conditions for the interaction principles are met. This means that the coimplication B ⊘ C will be able to descend into the phrase X and associate with any of the constituent parts Ai into a formula (B ⊘ C) ⦸ Ai.

X ⊢ B C ⊢ Y B\C ⊢ X\Y X ⊗ (B\C) ⊢ Y

X ⊢ B C ⊢ Y X ⊘ Y ⊢ B ⊘ C X ⊢ (B ⊘ C) ⊕ Y

In general, an expression of type (B ⊘ C) ⦸ A behaves locally as an A within a context of type B; it then acts as a function transforming B into C. We illustrate with the example of non-local scope construal for which we have seen the DL analysis in the previous section. The key point is the lexical type assignment to the generalized quantifier expression, instantiating (B ⊘ C) ⦸ A as (s ⊘ s) ⦸ np. The semantic interpretation of this derivation will be discussed below.

np ⊗ (((np\s)/s) ⊗ (np ⊗ (np\s))) ⊢ s s ⊢ s np ⊗ (((np\s)/s) ⊗ ( np ⊗ (np\s))) ⊢ (s ⊘ s) ⊕ s ⋮ np ⊗ (((np\s)/s) ⊗ (( (s ⊘ s) ⦸ np ) ⊗ (np\s)) ) ⊢ s Alice thinks someone left

Completeness, decidability. Relational models for LG are given in terms of two interpreting relations: R⊗ for multiplicative conjunction (merge, fusion), and R⊕ for multiplicative disjunction (fission). The truth conditions for co-product and the difference operations are given below.

- x ⊩ A ⊕ B iff ∀yz(if R⊕xyz, then either y ⊩ A or z ⊩ B)

- y ⊩ C ⊘ B iff ∃xz(R⊕xyz and not-(z ⊩ B) and x ⊩ C)

- z ⊩ A ⦸ C iff ∃xy(R⊕xyz and not-(y ⊩ A) and x ⊩ C)

Completeness of LG with respect to this interpretation is proved in Kurtonina and Moortgat 2010. The minimal symmetric system (without interaction principles) has fission R⊕ and merge R⊗ as distinct relations, without imposing restrictions on their interpretation. In the presense of the interaction principles, their interpretation is related in terms of frame constraints. The distributivity principle (A ⦸ B) ⊗ C ⊢ A ⦸ (B ⊗ C), for example, corresponds to the constraint that for every x,y,z,w,v, if we have a configuration R⊗xyz and R⊕vwy, then there exists an alternative internal point t such that R⊕twx and R⊗tvz. For decidability, Moortgat (2009) gives a sequent presentation of LG in the format of a Display Calculus allowing cut-free proof search.

Discussion. LG is a weaker logic than CNL, the Classical Non-Associative Lambek Calculus of de Groote and Lamarche 2002. In the latter system, like in classical linear logic, we have an involutive negation and De Morgan dualities turning multiplicative conjunction (times) and disjunction (par) into interexpressible operations. With respect to the linguistic applications, one can compare LG with the Discontinuous Calculus of §3.2. Whereas the latter provides uniform analyses of both the extraction and the infixation type of discontinuities, the distributivity principles of LG are primarily addressing the infixation variety. It may be interesting in this respect to note that Grishin 1983 proposes a second group of distributivity principles — converses of the ones discussed above. Whether these could play a role in the analysis of overt displacement remains to be seen. From a formal point of view, each of the groups of distributivities is a conservative extension of the basic symmetric calculus. But the combination of the two (i.e., the distributivity principles as invertible rules) induces partial associativity/commutativity of the (co)product operations, i.e., structure-preservation is lost.

3.4 Flexible interpretation, continuation semantics

In the previous sections, the emphasis was on extending the capabilities of the syntactic source calculi. In this section, we look at developments that put more structure in the mapping between the source and target calculi.

The syntax-semantics mapping, as discussed in §2.2, is rigid: once we have determined its action on the atomic types of the syntactic source calculus, everything is fixed. This rigidity is problematic as soon as we have a class of syntactic expressions with a non-uniform contribution to meaning assembly. Noun phrases are a case in point. Mapping np to semantic type e is appropriate for proper nouns and definite descriptions whose denotations can be taken to be individuals. But for quantifying expressions like ‘someone’, ‘no student’, an interpretation of type e is inadequate. The rigid syntax-semantics mapping thus forces one to assign these expressions a higher-order type in the syntax, for example s/(np\s), so as to obtain a semantic type with the right kind of denotation as the translation image, (e → t) → t. If one wants to avoid this semantically motivated complication of the syntax, one could set np′ = (e → t) → t. But now the effect will be that simple transitive verbs (np\s)/np, which one would like to treat as binary relations e → e → t by default, are associated with third-order interpretations instead.

Flexible interpretation. An attractive alternative to this rigid view of the syntax-semantics interface, is the flexible interpretation of Hendriks 1993. In this approach, a type of the syntactic source calculus is associated with an infinite set of LP types: one of these is the default semantic type associated with the syntactic source type, the others are derived from this default by means of type shifting laws. Similarly, a source calculus term is translated into an infinite set of target semantic terms; one of these is the basic translation (not necessarily of the default semantic type for the relevant syntactic category), the others are derived by the Curry-Howard term image of the typing shifting laws. The type shifting principles used are (i) argument lowering, (ii) value raising, and (iii) argument raising. The first two correspond to valid type transitions of NL; argument raising is the crucial principle that goes beyond the expressivity of the syntactic source calculi, as we saw in §2.2.

As an example of flexible interpretation, compare ‘John loves Mary’ and ‘Everyone loves someone’. Syntactically, all noun phrases are typed as np, and the verb as (np\s)/np. ‘John’ and ‘Mary’ are interpreted with constants of type e, ‘loves’ with a constant of type e → e → t, the default semantic types for the syntactic source types. But ‘everyone’ and ‘someone’ are mapped to constants of type (e → t) → t. Interpreting the syntactic derivation for ‘Everyone loves someone’, the semantic types no longer match for simple function application: the verb expects two e type arguments, but instead finds two arguments of type (e → t) → t. There are two ways of accommodating these higher-order arguments by means of argument raising: depending on whether one raises first the object argument and then the subject, or vice versa, one obtains two readings for one and the same syntactic derivation: a reading corresponding to the surface order, with ∀ taking scope over ∃, and a reading with the inverted scope order. The correspondence between syntax and semantics, under this flexible view, has become a relation, rather than being functionally determined by the translation homomorphism of §2.2.

Continuations. It has become clear in recent years, that the core ideas of Hendriks' type-shifting account of derivational ambiguity can be insightfully recast in terms of a continuation-passing-style interpretation (CPS), as developed within computer science, and the different evaluation strategies available for such interpretation. The use of continuations in natural language semantics has been pioneered by de Groote (2001b) and Barker (2002). In the theory of programming languages, a continuation is a representation of the control state, i.e., the future of the computation to be performed. By adding the control state as an explicit parameter in the interpretation, it becomes possible for a program to manipulate its continuation, and thus to express control constructs that would otherwise be unavailable. Technically, continuation semantics makes use of a designated response type for the ultimate result of a computation; a continuation for an expression of type A is a function that takes an A value to the response type. In the application to natural language semantics, the response type is generally identified with the type of truth values t, i.e., the type assigned to complete sentences.

Barker 2004 shows how to obtain the type-shifting scope ambiguity for ‘Everyone loves someone’ in terms of a continuation-passing-style translation. The key idea is that in mapping the source calculus to the semantic target calculus, all types are parameterized with an extra continuation argument. We use a new translation function (·)* for this, which composes the mapping from syntactic to semantic types with the continuization. A source language type A is now associated with a so-called computation in the target language, i.e., a function acting on its own continuation: A* = (A′ → t) → t.

At the level of proofs, given the above interpretation of types, the task is to find an LP proof for the sequent below, the image of the \ and / elimination rules for A, A\B ⊢ B and B/A, A ⊢ B. Whereas in the source calculus there is only one way of putting together an A\B (or B/A) function with its A argument, in the target calculus there is a choice as to the evaluation order: do we want to first evaluate the translation image of the argument, then that of the function, or the other way around. We write ·v for the first option (call-by-value) and ·n for the second (call-by-name). In the target language, m and n are variables of type A′ → B′ and A′ respectively; k is the resulting B′ → t continuation.

- LP translation of Application:

(A′ → t) → t, ((A′ → B′) → t) → t ⊢ (B′ → t) → t - call-by-value solution:

(M ⊲ N)v = (N ⊳ M)v = λk.(Nv λn.(Mv λm.(k (m n)))) - call-by-name solution:

(N ⊳ M)n = (M ⊲ N)n = λk.(Mn λm.(Nn λn.(k (m n))))

The continuation-passing-style interpretation, like the type-shifting approach, makes it possible to assign syntactic type np both to proper names and to quantificational noun phrases: in the target calculus, the translation of np has the appropriate semantic type (e → t) → t. But the lifting strategy is now generalized to all source types: a transitive verb (np\s)/np) is mapped to ((e → e → t) → t) → t, etc. For the translation of lexical constants of type A, the default recipe is λk.(k c), c a non-logical constant of type A′. The default translation simply passes the semantic value for these lexical items to the continuation parameter k. But quantificational noun phrases effectively exploit the continuation parameter: they take scope over their continuation, leading to lexical recipes λk.(∀ λx.(k x)), λk.(∃ λx.(k x)) for ‘everyone’ and ‘someone’. The choice between the evaluation strategies, in combination with these lexical recipes, then results in different interpretations for a single derivation in the source calculus M = (everyone⊳(loves⊲someone)), with ·v producing the surface scope construal, and ·n the inverted scope reading.

Mv = λk.(∀ λx.(∃ λy.(k ((loves y) x))) Mn = λk.(∃ λy.(∀ λx.(k ((loves y) x)))

The above example only uses the slash Elimination of NL in the syntactic source calculus. An important motivation for the introduction of continuations is that they make it possible to give a constructive interpretation to classical (as opposed to intuitionistic) logic; see Sørensen and Urzyczyn 2006 for discussion. It will be no surprise, then, that also the multiple-conclusion symmetric categorial grammar LG has a natural interpretation in the continuation-passing-style (Bernardi and Moortgat 2010, Moortgat 2009). In the example we gave above, source types A are provided with a single continuation parameter. In the CPS translation for LG types, the continuization of syntactic source types is performed recursively. Let us write V(A) for target language values of type A, K(A) for continuations, i.e., functions V(A) → R, and C(A) for computations, i.e., functions K(A) → R, where R is the response type. For an LG syntactic source type A, the call-by-value CPS translation yields an LP value V(A) as follows. V(p) = p for atomic types,

- implications: V(A\B) = V(B/A) = K(B) → K(A)

- coimplications (dual to implications): V(A ⊘ B) = V(B ⦸ A) = K(A\B)

At the level of proofs (and the terms in Curry-Howard correspondence with them), the CPS translation turns the multiple-conclusion source derivations into single-conclusion LP derivations. The translation respects the invariant below. An active output formula A (marked off by the vertical bar in the box below) maps to a computation C(A), an active input formula to a continuation K(A). A cut then is interpreted as the application of C(A) to K(A).

source: LG/,\,⊘,⦸ →CPS target: LP→ X ⊢ A | Y V(X),K(Y) ⊢ C(A) X | A ⊢ Y V(X),K(Y) ⊢ K(A) X ⊢ Y V(X),K(Y) ⊢ R

Discussion. Continuation-based approaches are now available for a number of recalcitrant phenomena that would seem to defy a compositional treatment. Examples, at the sentential level, include the treatment of in situ scope construal and wh questions of Shan and Barker 2006, where crossover and superiority violations are explained in terms of a preference of the human processor for a left-to-right evaluation strategy; donkey anaphora (Barker and Shan 2008)); quantifier scope ambiguities for the call-by-value and call-by-name interpretation of LG are investigated in Bernardi and Moortgat 2010. At the discourse level, de Groote (2006) gives a type-theoretic analysis of dynamic phenomena, modeling propositions as functions over a sentence's left and right context (continuation).

4. Proof nets and processing

In the previous sections, we have seen how the different categorial calculi can be presented as proof systems with a sequent-based decision procedure. Naive proof search in these systems, however, is inefficient from a computational point of view: in general, there will be multiple ways to construct alternative proofs that differ only in the order of the application of the inference rules, but produce the same interpretation (the LP term associated with a derivation under the interpretation homomorphism). This issue of ‘spurious’ ambiguity can be tackled by the introduction of normal form derivations (as in Hepple 1990, Hendriks 1993), compare focused proof search regimes in linear logic), combined with the use of chart-based parsing methods, as in Hepple 1999, Capelletti 2007. An alternative for categorial ‘parsing-as-deduction’ is to leave the sequent calculus for what it is, and to switch to a proof net approach. Proof nets, originally developed in the context of linear logic, use a representation of derivations that is inherently free of ‘spurious ambiguity’, i.e., the issue of irrelevant rule orderings simply doesn't arise. For a general treatment of proof nets, we refer to the entry on linear Logic. Below we comment on aspects that are specifically addressing issues in categorial grammar.

Proof nets for the Lambek calculi. Proof nets for the associative calculus L and the commutative variant LP have been studied initially by Roorda (1991, 1992). To capture the ‘intuitionistic’ nature of these systems, one works with formulas with polarities: input (‘given’) polarity for antecedent occurrences of a formula, versus output (‘to prove’) polarity for succedent occurrences. Correctness criteria identify proof nets among a wider class of graphs: to the criteria of acyclicity and connectedness, applicable for linear logic in general, one adds planarity to capture word order sensitivity: in the proof nets for L, axioms links cannot cross. With respect to the syntax-semantics interface, de Groote and Retoré (1996) show that the lambda terms in Curry-Howard correspondence with LP derivations in the semantic target calculus can be read off from a proof net by specifying a set of ‘travel instructions’ for traversing a net; these instructions then correspond step-by-step with the construction of the associated lambda term.

Incremental processing. Proof nets, considered statically as graphs satisfying certain correctness criteria, remove spurious choices relating to the order of rule applications in sequent calculi: in this respect, they represent a purely ‘declarative’ view on categorial deductions. Johnson (1998) and Morrill (2000) have pointed out that an alternative, ‘procedural’ view on the actual process of constructing a net makes perfect sense as well, and offers an attractive perspective on performance phenomena. Under this interpretation, a net is built in a left-to-right incremental fashion by establishing possible linkings between the input/output literals of the partial proof nets associated with lexical items as they occur in real time. This suggests a simple complexity measure on an incremental traversal, given by the number of unresolved dependencies between literals. This complexity measure correlates nicely with a number of well-attested processing issues, such as the difficulty of center embedding, garden path effects, attachment preferences, and preferred scope construals in ambiguous constructions.

First-order quantifiers. The planarity condition singles out the non-commutative proof nets for L among the LP nets. To deal with the more structured categorial calculi discussed here, the correctness criteria have to be refined. One strategy of doing this is via a translation into MILL1 (first-order multiplicative intuitionistic linear logic) where one has proof nets with extra links for existential and universal quantification over first order variables. One can then use these variables in a way very similar to the use of position arguments in Definite Clause Grammars as used in logic programming. Moot and Piazza (2001) work out such translations for L and NL. For the concatenation operations of L, one replaces the proposition letters (atomic formulas) by two-place terms, marking beginning and end of a continuous string. For non-associative NL, one adds an extra variable to keep track of the nesting depth of subformulas. For wrapping operations in the simple discontinuous calculus DL (allowing a single split point), Morrill and Fadda (2008) use four-place predicates. In general, we find a correlation here between the syntactic expressivity of the calculi and the number of variables needed to encode their structural resource management.

Nets and rewriting. The multimodal and symmetric calculi of §3.1 and §3.3 pose a challenge to the proof net methods as originally developed for linear logic. In these systems we typically find one-way structural rules, such as the extraction postulates for overt displacement, or the Grishin distributivity laws in the case of LG: these one-way rules naturally suggest a notion of graph rewriting. A completely general proof net framework for the extended Lambek calculi, with a correctness criterion based on rewriting, has been developed in Moot and Puite 2002 and Moot 2007.

The basic building block for the Moot-Puite nets is a generalized notion of a link, accommodating connectives of any arity. A link is determined by its type (tensor or cotensor), its premises (a sequence P1,…,Pn, 0 ≤ n), its conclusions (a sequence C1,…,Cm, 0 ≤ m), and its main formula (which can be empty, in the case of a neutral link, or one of the Pi or Ci). A proof structure is a set of links over a finite set of formulas such that every formula is at most once the premise of a link and at most once the conclusion. Formulas which are not the conclusion of any link are the hypotheses of the proof structures, whereas the formulas which are not the premise of any link are the conclusions. An axiom formula is a formula which is not the main formula of any link. Abstract proof structures are obtained by erasing all formulas on the internal nodes. A proof net is a proof structure for which the abstract proof structure converts to a tensor tree — a rooted tree in the case of the intuitionistic systems, possibly an unrooted tree in the case of symmetric LG. Proofs nets, so defined, can then be show to be the graphs that correspond to valid derivations.

The rewriting steps transforming a candidate abstract proof structure into a proof net are of two kinds. The logical contractions correspond to identities A ⊢ A, for complex formulas A; they reduce a configuration of a matching tensor and cotensor link to a point. The structural conversions perform an internal rewiring of a proof structure with hypotheses H1,…,Hn and conclusions C1,…,Cm to a structure with some permutation of the Hi as hypotheses and some permutation of the Ci as conclusions. Copying and deletion, in other words, are ruled out. As an example, the rewriting corresponding to one of the Grishin interaction principles discussed in §3.3, allowing us to infer C ⦸ A ⊢ D/B from A ⊗ B ⊢ C ⊕ D. Hypotheses A, B are on the left, conclusions C, D on the right. The net representation brings out in a particularly clear way that these principles are structure-preserving: they leave the order of hypotheses and conclusions untouched.

5. Recognizing capacity, complexity

Reflecting on the above, one can say that the extensions of the syntactic calculus reviewed here are motivated by the desire to find a proper balance between expressivity and computational tractability: on the side of expressivity, an adequate typelogical framework should be able to recognize patterns beyond the strictly context-free; ideally, one would like to keep such expressivity compatible with a polynomial derivability problem. Among contemporary ‘lexicalized’ grammar formalisms, there is a remarkable convergence on systems that meet these requirements: the so-called ‘mildly context-sensitive’ systems (Joshi, Vijay-Shanker, and Weir 1991). Where can we situate the typelogical systems discussed here with respect to recognizing capacity and complexity?

The minimal system in the typelogical hierarchy NL has a polynomial recognition problem (see de Groote 1999, and Capelletti 2007 for actual parsing algorithms), but it is strictly context-free (Kandulski 1988). Extensions with global structural rules are unsatisfactory, both on the expressivity and on the complexity front. As for L, Pentus (1993b, 2006) shows that it remains strictly context-free, whereas the addition of global associativity makes the derivability problem NP complete. NP completeness already holds for the product-free fragment of L (Savateev 2009). Also for LP, i.e., multiplicative intuitionistic linear logic, we have NP completeness (Kanovich 1994). With regard to recognizing capacity, van Benthem (1995) shows that LP recognizes all permutation closures of context-free languages: a class which is too wide from the syntactic point of view. As the logic of meaning assembly, LP is a core component of the typelogical inventory. But as we saw in the discussion of the syntax-semantics interface, we can restrict attention to the sublanguage of LP that forms the image of derivations in syntactic calculi making interesting claims about word order and phrase structure.

The situation of the multimodal and symmetric extensions is more intricate. Expressivity here is directly related to the kind of restrictions one imposes on structural resource management. At one end of the spectrum, multimodality without structural rules does not lead us beyond context-free recognition: Jäger (2003) shows that the pure residuation logic for n-ary families of type-forming operations stays strictly context-free. If one requires structural rules to be resource-sensitive (no copying or deletion) and, for the unary modalities, non-expanding, one obtains the full expressivity of context-sensitive grammars, and the PSPACE complexity that goes with it (Moot 2002). (PSPACE consists of those problems solvable using some polynomial amount of memory space. See the entry on computability and complexity. If one imposes no restrictions on structural rules (specifically, if one allows copying and deletion operations), unsurprisingly, one obtains the expressivity of unrestricted rewriting systems (Carpenter 1999). A controlled use of copying is used in the analysis of anaphora resolution in Jäger 2005.