Combinatory Logic

Combinatory logic (henceforth: CL) is an elegant and powerful logical theory that is connected to many areas of logic, and has found applications in other disciplines, especially, in computer science and mathematics.

CL was originally invented as a continuation of the reduction of the set of logical constants to a singleton set in classical first-order logic (FOL). CL untangles the problem of substitution, because formulas can be prepared for the elimination of bound variables by inserting combinators. Philosophically speaking, an expression that has no bound variables represents the logical form of the original formula. Sometimes, bound variables are thought to signify “ontological commitments.” Another philosophical rôle of CL is to show the variability of the ontological assumptions a theory has.

Substitution is a crucial operation not only in first-order logics, but also in higher-order logics, as well as in other formal systems that contain a variable binding operator, such as the \(\lambda\)-calculuses and the \(\varepsilon\)-calculus. Indeed, carrying out substitution correctly is particularly pressing in \(\lambda\)-calculuses and in the closely related functional programming languages. CL can emulate \(\lambda\)-abstraction despite the fact that CL has no variable binding operators. This makes CL a suitable target language for functional programming languages to be compiled into.

The connection to \(\lambda\)-calculuses might suggest—correctly—that CL is sufficiently expressive to formalize recursive functions (i.e., computable functions) and arithmetic. Consequently, CL is susceptible to Gödel-type incompleteness theorems.

CL is an archetypical term rewriting system (TRS). These systems comprise a wide range of formal calculuses from syntactic specifications of programming languages and context-free grammars to Markov algorithms; even some number theoretic problems may be viewed as special instances of questions about TRSs. Several notions and proof techniques that were originally invented for CL, later turned out to be useful in applications to less well-understood TRSs.

CL is connected to nonclassical logics via typing. First, a correspondence between formulas that are provable in the implicational fragment of intuitionistic logic and the typable combinatory terms was discovered. Then the isomorphism was generalized to other combinatory bases and implicational logics (such as the logic of relevant implication, exponential-free linear logic, affine logic, etc.).

Self-reference factors into some paradoxes, such as the widely known liar paradox and Russell’s paradox. The set theoretical understanding of functions also discourages the idea of self-application. Thus it is remarkable that pure untyped CL does not exclude the self-application of functions. Moreover, its mathematical models showed that a theory in which functions can become their own arguments is completely sensible, in addition to being consistent (what was established earlier using proof theoretic methods).

- 1. Schönfinkel’s elimination of bound variables

- 2. Combinatory terms and their main properties

- 3. Nonclassical logics and typed CL

- 4. Models

- 5. Computable functions and arithmetic

- Bibliography

- Academic Tools

- Other Internet Resources

- Related Entries

1. Schönfinkel’s elimination of bound variables

1.1 The problem of substitution

Classical first-order logic includes quantifiers that are denoted by \(\forall\) (“for all”) and \(\exists\) (“there is a”). A simple sentence such as “All birds are animals” may be formalized as \(\forall x(Bx\supset Ax)\), where \(x\) is a variable, \(B\) and \(A\) are one-place predicates, and \(\supset\) is a symbol for (material) implication. The occurrences of the variables in the closed formula \(\forall x(Bx\supset Ax)\) are bound, whereas those in the open formula \(Bx\supset Ax\) are free. If we assume that \(t\) (for “Tweety”) is a name constant, then an instance of the above sentence is \(Bt\supset At\), that may be read as “Tweety is an animal, provided Tweety is a bird.” This illustrates that the instantiation of a (universal) quantifier involves substitution.

Due to the simplicity of the example, the substitution of \(t\) for \(x\) in \(Bx\) and in \(Ax\) seems to be easy to understand and to perform. However, a definition of substitution for FOL (and in general, for an abstract syntax, that is, for a language with a variable binding operator) has to guarantee that no free occurrence of a variable in the substituted expression becomes bound in the resulting expression.

To see what can go wrong, let us consider the (open) formula \(\forall x(Rxy\land Rxr)\), where \(R\) is a two-place predicate, \(r\) is a name constant abbreviating “Bertrand Russell” and \(\land\) is conjunction. \(\forall x(Rxy\land Rxr)\) contains a free occurrence of \(y\) (that is, \(y\) is a free variable of the formula), however, \(y\) is not free for a substitution of a term that contains a free occurrence of \(x\), for instance, \(x\) itself. More formally, the occurrence of \(y\) in the second argument place of \(R\) in \(\forall x(Rxy\land Rxr)\) is not bound by a quantifier (the only quantifier) of the formula, whereas \(\forall x(Rxx\land Rxr)\) is a closed formula, that is, it contains no free occurrences of variables. Informally, the following natural language sentences could be thought of as interpretations of the previous formulas. “Everybody reads him and Russell,” (where ‘him’ is deictic, or perhaps, anaphoric) and “Everybody reads himself and Russell.” Obviously, the meanings of the two sentences are vastly different, even if we assume that everybody pens something. As a contrast, \(\forall x(Rxw\land Rxr)\) exhibits an unproblematic substitution of the name constant \(w\) for the free occurrence of \(y\). (The latter formula, perhaps, formalizes the sentence “Everybody reads Ludwig Wittgenstein and Bertrand Russell.”) These examples are meant to demonstrate the more complex part of the problem Moses Schönfinkel set out to solve, and for what he invented CL.[1]

1.2 The operators “nextand” and “\(U\)”

A well-known result about classical sentential logic (SL) is that all truth-functions can be expressed in terms of \(\lnot\) and \(\land\) (or of \(\lnot\) and \(\lor\), etc.). A minimal sufficient set of connectives, however, can contain just one connective such as \(\mid\) (“nand,” which is often called, Sheffer’s stroke), or \(\downarrow\) (“nor,” which is Peirce’s joint denial). “Nand” is “not-and,” in other words, \(A\mid B\) is defined as \(\lnot(A\land B)\), where \(A\), \(B\) range over formulas and \(\lnot\) is negation. Going into the other direction, if \(\mid\) is a primitive, then \(\lnot A\) is definable as \(A\mid A\), and \(A\land B\) is \((A\mid B)\mid(A\mid B)\). Although formulas with numerous vertical lines may quickly become visually confusing and hard to parse, it is straightforward to prove that \(\mid\) alone is sufficiently expressive to define all the truth-functions.

Schönfinkel’s aim was to minimize the number of logical constants that are required for a formalization of FOL, just as Henry M. Sheffer (indeed, already Charles S. Peirce) did for classical propositional logic. One of the two quantifiers mentioned above suffices and the other may be assumed to be defined. Let us say, \(\exists x A\) is an abbreviation for \(\lnot\forall x\lnot A\). Even if \(\lnot\) and the rest of the connectives are traded in for \(\mid\), two logical constants remain: \(\forall\) and \(\mid\). A further pressing issue is that quantifiers may be nested (i.e., the scope of a quantifier may fully contain the scope of another quantifier), and the variable bindings (that could be visualized by drawing lines between quantifiers and the variables they bind) may get quite intertwined. Keeping for a moment the familiar logical constants, we may look at the following formula that hints at the emerging difficulties—when the question to be tackled is considered in its full generality.[2]

\[ \forall x(\exists y(Py\land Bxy)\supset\exists y(Py\land Bxy\land \forall z((Rz\land Ozy)\supset \lnot Cz))) \]\(\forall x\) binds all occurrences of \(x\); the variables in the second argument place of the two \(B\)s are bound by one of the two \(\exists y\)s, the latter of which interacts with \(\forall z\) via \(Ozy\).

Predicates have a fixed finite arity in FOL, and nothing precludes binding at once a variable in the first argument of one predicate and in the second argument of another predicate. (Indeed, FOL would lose some of its expressiveness, if bindings of this sort would be excluded without some means to compensate for them.) These difficulties persist when a formula is transformed into a(n equivalent) formula in prenex normal form. As long as the variable bindings can interweave and braid into arbitrarily complex patterns, there seems to be no way to eliminate bound variables. (Note that free variables in open formulas—in a sense—behave like local name constants, and their elimination is neither intended, nor achieved in the procedures described here.)

Schönfinkel’s ingenuity was that he introduced combinators to untangle variable bindings. The combinators \(\textsf{S}\), \(\textsf{K}\), \(\textsf{I}\), \(\textsf{B}\) and \(\textsf{C}\) (in contemporary notation) are his, and he established that \(\textsf{S}\) and \(\textsf{K}\) suffice to define all the other combinators. In effect, he also defined an algorithm to carry out the elimination of bound variables, which is essentially one of the algorithms used nowadays to define bracket abstraction in CL.[3]

Schönfinkel introduced a new logical constant \(U\), that expresses the disjointness of two classes. For instance, \(UPQ\) may be written in usual FOL notation as \(\lnot\exists x(Px\land Qx)\), when \(P\) and \(Q\) are one-place predicates. (The formula may be thought to formalize, for instance, the natural language sentence “No parrots are quiet.”) In the process of the elimination of the bound variables, \(UXY\) is obtained from an expression that contains ‘\(Xx\)’ and ‘\(Yx\)’, where \(x\) does not occur in \(X\) or \(Y\). For example, if \(X\) and \(Y\) happen to be \(n\)-ary predicates with \(n\ge2\), then \(x\) occurs only in their last argument places. “Nobody reads Aristotle and Plato” can be formalized as \(\lnot\exists x(Rxa\land Rxp)\), where \(a\) and \(p\) are name constants that stand for “Aristotle” and “Plato,” respectively. This formula cannot be written as \(U(Ra)(Rp)\). On the other hand, “There is nobody whom both Russell and Wittgenstein read,” that is, \(\lnot\exists x(Rrx\land Rwx)\) turns into \(U(Rr)(Rw)\), where the parentheses delineate the arguments of \(U\). Often, the expressions \(X\) and \(Y\) (in \(UXY\)) consist of predicates (and name constants) together with combinators and other \(U\)s.

It is useful to have a notation for “nextand” (i.e., “not-exists-and”) without assuming either that \(x\) has a free occurrence in the expressions joined, or that if it has one, then it is the last component of the expressions. Following Schönfinkel, we use \(\mid^x\) for the “nextand” operator that binds \(x\). (The notation \(\mid^-\), where \(^-\) is the place for a variable, closely resembles the Sheffer stroke.) Schönfinkel achieved his goal of the reduction of the set of logical constants for FOL to a singleton set \(\{\mid^-\}\), because every formula of FOL is equivalent to a formula that contains only “nextand.”

A formula \(\forall x A\) is usually defined to be well-formed in FOL even if \(A\) has no free occurrences of \(x\). Then, of course, \(\forall x A\) is equivalent to \(A\) as well as to \(\exists x A\), and such quantifiers are called vacuous. In order to show that any formula can be rewritten into an equivalent formula that contains only “nextand,” it is sufficient to inspect the following definitions for \(\lnot\), \(\lor\) and \(\forall\) (with suitable variables)—that are due to Schönfinkel.

\[\begin{align*} \lnot A & \Leftrightarrow_\textrm{df} A\mid^x A \\ A\lor B & \Leftrightarrow_\textrm{df} (A\mid^yA)\mid^x(B\mid^yB) \\ \forall xAx &\Leftrightarrow_\textrm{df} (Ax\mid^yAx)\mid^x(Ax\mid^yAx) \end{align*}\]The definition for \(\lnot\), for instance, may be justified by the following equivalences. \(A\Leftrightarrow A\land A\), \(A\land A\Leftrightarrow \exists x(A\land A)\) (assuming that \(x\) is not free in \(A\)), hence by replacement, \(\lnot A\Leftrightarrow \lnot\exists x(A\land A)\).

Now we give a concrete example to illustrate how to turn a formula of FOL into one that contains only \(\mid^-\), and then how to eliminate the bound variables using \(U\) and combinators. To put some excitement into the process, we start with the sentence in (#1).

- (#1)

- For every natural number there is a greater prime.

A straightforward formalization of this sentence—on the foreground of the domain of numbers—is the formula in (#2), (where ‘\(Nx\)’ stands for “\(x\) is a natural number,” ‘\(Px\)’ stands for “\(x\) is a prime” and ‘\(Gxy\)’ is to be read as “\(x\) is greater that \(y\)”).

- (#2)

- \(\forall y\exists x(Ny\supset(Px\land Gxy))\)

This formula is equivalent to \(\forall y(Ny\supset\exists x(Px\land Gxy))\) and further to \(\lnot\exists y\lnot(Ny\supset\exists x(Px\land Gxy))\). In one or two more steps, we get \(Ny\mid^y(Px\mid^xGxy)\). (Expressions are considered to be grouped to the left unless parentheses indicate otherwise. E.g., \(Gxy\) is \(((Gx)y)\) not \(G(xy)\) as could have been, perhaps, expected based on the most common way of arranging parentheses in FOL formulas.) Unfortunately, neither \(\mid^x\) nor \(\mid^y\) can be replaced by \(U\) in the last expression. However, if the arguments of \(G\) were permuted then the former reduction could be carried out. One of the combinators, \(\textsf{C}\) does exactly what is needed: \(Gxy\) can be changed to \(\textsf{C}Gyx\) (see the definition of combinators in section 2.1). That is, we have \(Ny\mid^y(Px\mid^x\textsf{C}Gyx)\), and then \(Ny\mid^yUP (\textsf{C}Gy)\).[4] The expression may give the impression that \(y\) is the last component of \(UP(\textsf{C}Gy)\), which is the second argument of \(\mid^y\), but it is not so. The grouping within expressions cannot be disregarded, and another combinator, \(\textsf{B}\) is needed to turn \(UP(\textsf{C}Gy)\) into the desired form \(\textsf{B}(UP)(\textsf{C}G)y\). From \(Ny\mid^y\textsf{B}(UP)(\textsf{C}G)y\), we get \(UN(\textsf{B}(UP) (\textsf{C}G))\) in one more step. This expression is completely free of variables, and it also makes the renaming of bound variables in FOL easily comprehensible: given two sequences of (distinct) variables that are different in their first two elements, the reversal of the above process yields formulas that are (logically equivalent) alphabetic variants of the formula in (#2).

The expression \(UN(\textsf{B}(UP)(\textsf{C}G))\) may look “unfamiliar” when compared to formulas of FOL, but notation—to a large extent—is a matter of convention. It may be interesting to note that the first \(U\) is simply followed by its two arguments, however, the second \(U\) is not. \(\textsf{B}(UP)\) is a subexpression, but \(UP(\textsf{C}G)\) is not a subexpressions of \(UN (\textsf{B}(UP)(\textsf{C}G))\). Furthermore, the whole expression can be transformed into \(XNPG\) using combinators, where \(X\) is composed of \(U\)s and combinators only. Such an \(X\) concisely encodes the logical form or logical content of the formula with the predicates being arguments.[5]

The expressions obtained via the transformations outlined above quickly become lengthy—as trying to rewrite a simple FOL sentence such as \(\exists x(Px\land Qx)\) can show.[6] However, this does not diminish the importance of Schönfinkel’s theoretical results. A slight increase (if any) in the length of the expressions is not even an inconvenience, let alone an impediment in the era of computers with petabytes (or even exa- and zettabytes) of memory.

It seems unfortunate that Schönfinkel’s reduction procedure for FOL is not widely known, despite the recent publication of Wolfram (2020). This book was written to celebrate the 100-year anniversary of Schönfinkel’s lecture (in which he introduced combinators) with computer-aided explorations of the realm of combinators and with documents filling in gaps in the historical context. As a measure of how widely Sheffer’s and Schönfinkel’s reductions are known, we appeal to the fact that the first is part of standard intro courses in logic, whereas the second is not. Undoubtedly, one of the reasons for this is that Schönfinkel’s process to eliminate bound variables is conceptually more opulent than defining a few truth functions from \(\mid\) (or \(\downarrow\)). Another reason may be that Schönfinkel, perhaps, did not place a sufficiently strong emphasis on the intermediate step that allows the elimination of all other logical connectives and quantifiers via “nextand.” The importance of this step was also overlooked in the introduction to the English translation of Schönfinkel’s paper, which was written more than 30 years after the original publication. We may also note that although “nextand” is an operator in the standard logical sense, it is binary—unlike \(\forall\) and \(\exists\), which are unary.

If \(A\mid B \Leftrightarrow_\textrm{df} \lnot(A\land B)\) is added as a definition to SL, then the result is a conservative extension, and it becomes provable that for any formula \(A(p_0,\ldots,p_n)\) (i.e., for a formula containing the displayed propositional variables and some connectives) there is a formula \(B(p_0,\ldots,p_n)\) containing only the connective \(\mid\), and \(B(p_0,\ldots,p_n)\Leftrightarrow A(p_0,\ldots,p_n)\) itself is provable. \(\mid\) is, of course, interpreted as the “nand” truth function. “Nand” as a binary connective or as a binary truth function is of the same sort of object as conjunction, disjunction, etc.

The first stage in Schönfinkel’s extension of FOL is analogous. The addition of \(\mid^-\) is (also) a conservative extension of FOL, and every occurrence of \(\mid^-\) can be eliminated. (We noted that \(\mid^-\) is a binary operator, and so it may be thought to combine a quantifier (\(\exists\)) with connectives (\(\lnot\), \(\land\)), but \(\mid^-\) of course, does not introduce any objects that are not definable in FOL.)

The second stage in Schönfinkel’s extension of FOL is slightly different. \(UXY\) is definable in FOL only for one-place predicates \(P\) and \(Q\) (or for predicates of higher arity when the variable in their last argument is bound). Thus, in general, neither \(U\) nor the combinators are definable in FOL.

The elimination of bound variables goes beyond the resources of FOL. The combinators are not only undefinable, but they are new kinds of objects—which are absent from FOL itself. Also, the intermediate steps of the bound variable elimination procedure presuppose that functions of several arguments can be viewed as functions in one variable, and the other way around.[7] Enriching a presentation of FOL with predicate letters that have sufficiently many arguments in the right order would be more or less unproblematic, and it would add objects to the language that would have the same sort of interpretation as other predicates. A potential problem though is that for each predicate, infinitely many (\(\aleph_0\) many) new predicates would be needed—together with axioms stipulating the intended equivalences between the meanings of the variants of the predicates. Notationally, these steps amount to padding predicate symbols with extra arguments, omitting some arguments, as well as permuting and regrouping the arguments. Although some of these additions may look superfluous or too fussy, for the understanding of Schönfinkel’s procedure to eliminate bound variables, it is crucial to note that formulas are viewed as structured strings of symbols.[8]

In conclusion to this section, it is important to emphasize that there are no questions of consistency with respect to the above reduction process, because it can be viewed—or described in contemporary terms—as a well-defined algorithm. It is a completely different issue that if we consider the language of FOL expanded with combinators, then the resulting system is inconsistent, because CL is powerful enough to define the fixed point of any function. The effect of having fixed points for all functions—including truth functions—may be thought to amount to adding certain biconditionals (which may or may not be valid) as axioms. (For instance, Russell’s paradox emerges from the fixed point of the negation connective.) Notably, both FOL and (pure) CL are consistent.

1.3 Alternative approaches: basic logic and predicate functors

In this section we briefly outline two ideas that are related to Schönfinkel’s work or are motivated by his use of combinators in the elimination of bound variables.

Fitch’s metalogic

From the late 1930s, Frederic Fitch worked on a logic that he called

basic logic. The label is motivated by his aim to provide a

framework in which any logic could be formalized. Fitch’s

approach is utterly syntactic (much like

Schönfinkel’s), and “formalization” is to be

understood as encoding a formally described system in

another—not unlike the arithmetization of the syntax in

Gödel’s incompleteness theorem.

In 1942, Fitch introduced a logic that he labeled \(K\). The expressions in \(K\) are formed like combinatory terms by a binary application operation, which is not assumed to be associative. (See the definition of combinatory terms in the next section.) However, the constants of \(K\) do not coincide with the constants of pure CL. Fitch uses 10 constants: \(\varepsilon\), \(o\), \(\acute{\varepsilon}\), \(\acute{o}\), \(W\), \(=\), \(\land\), \(\lor\), \(E\) and \(*\). The first five constants are combinators, though the notation may suggest a different (informal) meaning. ‘\(=\)’ is the syntactical identity of expressions. ‘\(\land\)’ and ‘\(\lor\)’ are intended to stand for “and” and “or.” ‘\(E\)’ is the analogue of Schönfinkel’s \(U\), but it corresponds to a non-vacuous existential quantifier. Finally, ‘\(*\)’ is similar to the transitive closure operator for binary relations or the Kleene star. Notably, there is no negation or universal quantifier in the system. The uses of the constants are characterized as follows—somewhat like axioms characterize combinators.

- \(=ab\) if and only if \(a\) and \(b\) are (syntactically) the same expression

- \(\varepsilon ab\) if and only if \(ba\)

- \(oabc\) if and only if \(a(bc)\)

- \(\acute{\varepsilon} abc\) if and only if \(bac\)

- \(\acute{o} abcd\) if and only if \(a(bc)d\)

- \(Wab\) if and only if \(abb\)

- \(\land ab\) if and only if \(a\) and \(b\)

- \(\lor ab\) if and only if \(a\) or \(b\)

- \(Eb\) if and only if \(\exists a.\,ba\)

- \(*abc\) if and only if \(abc\) and \(\exists d.\,abd\&adc\)

In CL, the axioms are followed up with notions such as one-step and weak reduction, the latter of which can be viewed as a computation or inference step. (See the next section for some of these notions.) Similarly, an axiomatic calculus for FOL, for instance, would contain rules of inference in addition to the axioms. One of the obstacles to penetrate the various presentations of basic logic is the lack of a similar formulation.

During the next two decades or so after his first paper on basic logic, Fitch published a series of papers on basic logic devoted to (1) the representation of recursive functions (i.e., a demonstration of the possibility of the arithmetization of syntax), (2) \(K^\prime\), an extension of \(K\) with negation, universal quantifier and # (the dual of the \(*\) operator), (3) the consistency of \(K\) and \(K^\prime\), (4) \(L\), an extension of \(K^\prime\) with implication and necessity operators, (5) the definability of some of the constants such as \(*\) and #, as well as \(E\).

The combinators that are included in \(K\) (hence, in all its extensions) are \(\textsf{T}\), \(\textsf{B}\) and \(\textsf{W}\). \(\acute \varepsilon\) and \(\acute o\) are the ternary version of \(\textsf{T}\) and the quaternary version of \(\textsf{B}\), respectively. Russell’s paradox involves negation, but (either variant of) Curry’s paradox is positive, in the sense that it relies on one or two theorems of the positive implicational logic of David Hilbert. This means that if the various systems of basic logic, especially \(K^\prime\) and \(L\) are consistent, then they either cannot contain full abstraction, or the notions of implication, entailment and identity should differ from their usual counterparts. Indeed, \(K\), \(K^\prime\) and \(L\) are not extensional systems. That is, even if two expressions applied to the same expression are always equal, the equality of the applied expressions does not follow. Turning basic logic into an extensional system proved less than straightforward. Fitch’s system \(JE^\prime\) was shown to be inconsistent by Myhill, which led to a more complicated formulation of the conditions for extensional identity.

Basic logic has not (yet) become a widely used general framework for the description of formal systems; however, renewed interest in this approach is signaled by Updike (2010), which attempts to situate basic logic in the broader context of foundational work at the middle of the 20th century.

Quine’s elimination strategy

From the late 1930s, W. V. O. Quine worked on an alternative way to

eliminate bound variables from first-order logic. It is plausible to

assume that Schönfinkel’s goal was to find a single

operator in classical logic and then to eliminate the bound

variables—as he claims in Schönfinkel (1924)—rather

than defining an overarching symbolic system to describe all

mathematics. Nonetheless, CL was soon fused with classical logic in a

more free-wheeling fashion, which resulted in an inconsistent

system.

Quine saw the way out of a situation where inconsistency may arise via implicit typing of constants that are to some extent similar to combinators. He called such constants predicate functors, and introduced several groups of them, the last one in Quine (1981).

The most common presentations of FOL stipulate that an \(n\)-place predicate followed by a sequence of \(n\) terms (possibly, punctuated by commas and surrounded by parentheses) is a formula. (This is in contrast with Schönfinkel’s view of formulas and in accordance with the informal and formal interpretations of predicates as \(n\)-ary relations. In other words, FOL does not permit “currying” of predicates or of their interpretations.) Quine subscribes to the view that sequences of terms follow predicates.

Predicate functors are not applicable to each other—unlike the combinators are. This is a point that Quine repeatedly emphasizes. Atomic predicates are the predicates of a first-order language, whereas complex predicates are obtained by applying a predicate functor (of appropriate arity) to predicates (which may be atomic or complex).

The prohibition of self-application together with the use of “flat” sequences of arguments means that infinitely many predicate functors are needed to ensure the elimination of bound variables from all formulas of FOL. To explain the problem quickly: a permutation of a pair of elements that are arbitrarily far apart cannot be ensured otherwise. Just as combinators may be divided into groups based on their effect, Quine was able to select predicate functors that can be grouped together naturally based on their effects. Indeed, the groups of predicate functors are similar to classes of combinators, though Quine’s labels are often sublime. In order to give a concrete example of this alternative approach, we outline a slightly modified version of a set of predicate functors from Quine (1981).

A first-order language with \(\mid^-\) as the only operator is assumed. (\(F\) and \(G\) are metavariables for predicates in the predicate functor language.) \(\wr^n\) \(\textit{Inv}^n\), \(\textit{inv}^n\), \(\textit{Pad}^{n+1}\) and \(\textit{Ref}^n\) are predicate functors, for every \(n\in\omega\). A formula of FOL is rewritten into a formula in a predicate functor language by applications of the following clauses.

- A variable \(x\) and a predicate \(P\) of FOL is \(x\) and \(P\), respectively, in the predicate functor language.

- \(Fx_1x_2\ldots x_n\mid^{x_1}Gx_1x_2\ldots x_n \mathbin{{:}{=}{:}} (F\wr G)x_2\ldots x_n\), where \(x_2,\ldots, x_n\) are distinct from \(x_1\), and \(F\) and \(G\) are followed by the same sequence of variables.

- \(Fx_1x_2\ldots x_n \mathbin{{:}{=}{:}} (\textit{Inv }F)x_2\ldots x_nx_1\)

- \(Fx_1x_2\ldots x_n \mathbin{{:}{=}{:}} (\textit{inv }F)x_2x_1\ldots x_n\)

- \(Fx_2\ldots x_n \mathbin{{:}{=}{:}} (\textit{Pad } F)x_1x_2\ldots x_n\)

- \(Fx_1x_1x_2\ldots x_n \mathbin{{:}{=}{:}} (\textit{Ref }F )x_1x_2\ldots x_n\)

There is an obvious similarity between \(\textit{Ref}\) and \(\textsf{W}\), \(\textit{Pad}\) and \(\textsf{K}\), as well as \(\textit{Inv}\) and \(\textit{inv}\) and various combinators with permutative effects (e.g., \(\textsf{C}\) and \(\textsf{T}\)). If \(\mid^-\) is the only operator in the first-order language, then all formulas, which are not atomic, are almost of the form of the left-hand side expression in 2. What has to be assured is that the side condition is satisfied, and that is where clauses 3–6 enter. Although the various \(n\)-ary versions of \(\wr\), \(\textit{inv}\), \(\textit{Pad}\) and \(\textit{Ref}\) could be conflated (by ignoring unaffected arguments), \(\textit{Inv}\) clearly stands for infinitely many predicate functors, because \(x_1,\ldots,x_n\) cannot be ignored or omitted.

It may be interesting to note that there is a difference between \(\wr\) and Schönfinkel’s \(U\). Not only the place of the bound variable is different, but \(\wr\) builds in contraction for \(n-1\) variables (which are separated by \(\mid^-\) and other symbols in the left-hand expression).

Quine intended the predicate functor language to lead to a novel algebraization of first-order logic. While bound variables can be eliminated using predicate functors, Quine never defined an algebra in the usual sense—something similar, for instance, to polyadic or cylindric algebras. Predicate functors, by design, have a very limited applicability, which has the unfortunate side effect that they seem to be of little interest and not much of use outside their intended context.

2. Combinatory terms and their main properties

2.1 Reduction, equality and their formalizations

The paradoxes that were discovered by Georg Cantor and Bertrand Russell in the late 19th–early 20th century both involve self-membership of a set. The ramified theory of types due to Alfred N. Whitehead and Bertrand Russell, and ZF (the formalization of set theory named after Ernst Zermelo and Abraham A. Fraenkel) exclude self-membership. However, there seems to have been always a desire to create a theory that allows self-membership or self-application. Indeed, one of Curry’s motivations for the development of CL was the goal to construct a formal language that includes a wide range of well-formed expressions, some of which—under certain interpretations—may turn out to be meaningless. (This idea may be compared to the von Neumann–Bernays–Gödel formalization of set theory, in which—without the axiom of foundation—the Russell class can be proved not to be a set, hence, to be a proper class.)

A few natural language examples provide a convenient illustration to clarify the difference between (1), that is a well-formed (but meaningless) expression and (2), which is a meaningful (but ill-formed) sentence. (The meaningfulness of (2), of course, should be taken with a grain of salt. In reality, Kurt Gödel proved the system of PM to be incomplete in 1930. Thus (2) may be guessed—using syntactic and semantics clues—to be a distorted version of (2′) Peano arithmetic was proved to be incomplete by Gödel in 1930.)

- (1)

- The derivative of \(\lambda x\,(x^2+4x-6)\) wishes to declare that functions are smart.

- (2)

- Peano arithmetics prove incomplete with Gödel at 1930.

After these informal motivations, we turn to CL proper and introduce some of its notions a bit more formally.

The objects in CL are called terms.[9] Terms may be thought to be interpreted as functions (as further explained in section 4.1). Primitive terms comprise variables and constants, whereas compound terms are formed by combining terms. Usually, a denumerable set (i.e., a set with cardinality \(\aleph_0\)) of variables is included, and the constants include some (undefined) combinators. (We use \(x,y,z,v,w,u,x_0,\ldots\) as variables in the object language, and \(M,N,P,Q,\ldots\) as metavariables that range over terms.)

Terms are inductively defined as follows.

- (t1)

- If \(x\) is a variable, then \(x\) is a term;

- (t2)

- if \(c\) is a constant, then \(c\) is a term;

- (t3)

- if \(M\) and \(N\) are terms, then (\(MN\)) is a term.

In the above definition, (t3) conceals the binary operation that conjoins the two terms \(M\) and \(N\). This operation is called application, and it is often denoted by juxtaposition, that is, by placing its two arguments next to each other as in (\(MN\)).

Application is not assumed to possess additional properties (such as commutativity), because its intended interpretation is function application. For instance, \(((vw)u)\) and \((v(wu))\) are distinct terms—just as the derivative of \(\lambda x.\,x^2+4x-6\) applied to 8 (that is, (\(\lambda x.\,2x+4)8=20\)) is different from the derivative of 90 (that is, \((8^2+32-6)'=0\)). Using \(\lambda\) notation, the two terms in the example may be expressed as

\[ ((\lambda y.\,y')(\lambda x.\,x^2+4x-6))8 \]vs

\[ (\lambda y.\,y')((\lambda x.\,x^2+4x-6)8). \]If terms are viewed as structured strings (where parentheses show grouping), then the number of distinct terms associated to a string of length \(n\) is the Catalan number \(C_{n-1}\). For a non-negative integer \(n\) (i.e., for \(n\in\mathbb{N}\)),

\[ C_n = \frac{1}{n+1} {2n \choose n}. \]The first seven Catalan numbers are \(C_0=1\), \(C_1=1\), \(C_2=2\), \(C_3=5\), \(C_4=14\), \(C_5=42\) and \(C_6=132\). As an illustration, we may take—for simplicity—strings consisting of \(x\)s, because the terms are to differ only in their grouping. Clearly, if the term is \(x\) or \(xx\), that is of length 1 or 2, then there is only one way to form a term, that is, there exists just one possible term in each case. If we start with three \(x\)s, then we may form \((xx)x\) or \(x(xx)\). If the length of the term is 4, then the five terms are: \(xxxx\), \(x(xx)x\), \(xx(xx)\), \(x(xxx)\) and \(x(x(xx ))\). (It is a useful exercise to try to list the 14 distinct terms that can be formed from 5 \(x\)s.)

The usual notational convention in CL is to drop parentheses from left-associated terms together with the outmost pair. For instance, \(xyz\) would be fully written as \(((xy)z)\), whereas \(xy(xz)\) and \((xy)(xz)\) are both “shorthand versions” of the term \(((xy)(xz))\) (unlike \(xyxz\)). Grouping in terms delineates subterms. For instance, \(xy\) is a subterm of each of the terms mentioned in this paragraph, whereas \(yz\) and \(yx\) are subterms of none of those terms.

Subterms of a term are recursively defined as follows.

- (s1)

- \(M\) is a subterm of \(M\);

- (s2)

- if \(M\) is a subterm of \(N\) or of \(P\), then \(M\) is a subterm of \(NP\).

Incidentally, the notion of free variables is straightforwardly definable now: \(x\) is a free variable of \(M\) iff \(x\) is a subterm of \(M\). The set of free variables of \(M\) is sometimes denoted by \(\textrm{fv}(M)\).

All terms are interpreted as functions, and combinators are functions too. Similarly, to some numerical and geometrical functions, that can be described and grasped easily, the combinators that are frequently encountered can be characterized as perspicuous transformations on terms. (Sans serif letters denote combinators and \(\mathbin{\triangleright_1}\) denotes one-step reduction.)

| \(\textsf{S}xyz \mathbin{\triangleright_1} xz(yz)\) | \(\textsf{K}xy \mathbin{\triangleright_1} x\) | \(\textsf{I}x \mathbin{\triangleright_1} x\) |

| \(\textsf{B}xyz \mathbin{\triangleright_1} x(yz)\) | \(\textsf{T}xy \mathbin{\triangleright_1} yx\) | \(\textsf{C}xyz \mathbin{\triangleright_1} xzy\) |

| \(\textsf{W}xy \mathbin{\triangleright_1} xyy\) | \(\textsf{M}x \mathbin{\triangleright_1} xx\) | \(\textsf{Y}x \mathbin{\triangleright_1} x(\textsf{Y}x)\) |

| \(\textsf{J}xyzv \mathbin{\triangleright_1} xy(xvz)\) | \(\textsf{B}^\prime xyz \mathbin{\triangleright_1} y(xz)\) | \(\textsf{V}xyz \mathbin{\triangleright_1} zxy\) |

These axioms tacitly specify the arity of a combinator as well as their reduction (or contraction) pattern. Perhaps, the simplest combinator is the identity combinator \(\textsf{I}\), that applied to an argument \(x\) returns the same \(x\). \(\textsf{K}\) applied to \(x\) is a constant function, because when it is further applied to \(y\), it yields \(x\) as a result, that is, \(\textsf{K}\) is a cancellator with respect to its second argument. \(\textsf{W}\) and \(\textsf{M}\) are duplicators, because in the result of their application one of the arguments (always) appears twice.[10] \(\textsf{C}\), \(\textsf{T}\) and \(\textsf{V}\) are permutators, because they change the order of some of their arguments. \(\textsf{B}\) is an associator, because \(\textsf{B}xyz\) turns into a term in which \(y\) is applied to \(z\) before \(x\) is applied to the result. \(\textsf{Y}\) is the fixed point combinator, because for any function \(x\), \(\textsf{Y}x\) is the fixed point of that function (see section 2.3). The combinator \(\textsf{B}^\prime\) is an associator and a permutator, whereas \(\textsf{S}\) and \(\textsf{J}\) are also duplicators. \(\textsf{S}\) is very special and it is called the strong composition combinator, because when applied to two functions, let us say, \(g\) and \(f\) (in that order), as well as \(x\), then the resulting term \(gx(fx)\) expresses the composition of \(g\) and \(f\) both applied to the same argument \(x\).

These informal explications did not mention any restrictions on the sort of functions \(x,y,z,f,g,\ldots\) may be. However, the axioms above limit the applicability of the combinators to variables. Intuitively, we would like to say that given any terms, that is, any functions \(M\) and \(N\), \(\textsf{W}MN\) one-step reduces to \(MNN\) (possibly, as a subterm of another term). For example, \(M\) may be \(\textsf{K}\) and \(N\) may be \(yy\), and then \(\textsf{WK}(yy)\triangleright_1\textsf{K}(yy) (yy)\). The latter term suggests a further one-step reduction, and indeed we might be interested in successive one-step reductions—such as those leading from \(\textsf{WK}(yy)\) to \(yy\). A way to achieve these goals is to formalize (a theory of) CL starting with the standard inequational logic but to omit the anti-symmetry rule and to add certain other axioms and rules.

| \(M\triangleright M\) | \(\textsf{S}MNP\triangleright MP(NP)\) | \(\quad\textsf{K}MN\triangleright M\) |

| \(\dfrac{M\triangleright N \quad N\triangleright P}{M\triangleright P}\) | \(\dfrac{M\triangleright N}{MP\triangleright NP}\) | \(\dfrac{M\triangleright N}{PM\triangleright PN}\) |

The use of metavariables encompasses substitution (that we illustrated above on the term \(\textsf{W}MN)\). The identity axiom and the rule of transitivity imply that \(\triangleright\) is a transitive and reflexive relation. The last two rules characterize application as an operation that is monotone in both of its argument places. \(\text{CL}_\triangleright\) includes only \(\textsf{S}\) and \(\textsf{K}\), because the other combinators are definable from them—as we already mentioned in section 1.2, and as we explain more precisely toward the end of this section.

The set of combinators \(\{\textsf{S},\textsf{K}\}\) is called a combinatory base, that is, these two combinators are the undefined constants of \(\text{CL}_\triangleright\). To give an idea of a proof in this calculus, the following steps may be pieced together to prove \(\textsf{SKK} (\textsf{KSK})\triangleright \textsf{S}\). \(\textsf{KSK}\triangleright \textsf{S}\) is an instance of an axiom. Then \(\textsf{SKK}(\textsf{KSK}) \triangleright\textsf{SKKS}\) is obtained by right monotonicity, and further, \(\textsf{SKK}(\textsf{KSK})\triangleright\textsf{S}\) results by instances of the \(\textsf{S}\) and \(\textsf{K}\) axioms together with applications of the transitivity rule.

The relation \(\triangleright\) is called weak reduction, and it may be defined alternatively as follows. (‘Weak reduction’ is a technical term used in CL to distinguish this relation on the set of terms from some other relations, one of which is called ‘strong reduction’.) A term that is either of the form \(\textsf{S}MNP\) or of the form \(\textsf{K}MN\) is a redex, and the leading combinators (\(\textsf{S}\) and \(\textsf{K}\), respectively) are the heads of the redexes. If a term \(Q\) contains a subterm of the form \(\textsf{S}MNP\), then \(Q^\prime\), which is obtained by replacing that subterm by \(MP(NP)\) is a one-step reduct of \(Q\). (Similarly, for the redex \(\textsf{K} MN\) and \(M\).) That is, \(Q\triangleright Q^\prime\) in both cases. Reduction then may be defined as the reflexive transitive closure of one-step reduction. This notion is completely captured by \(\text{CL}_\triangleright\). The calculus \(\text{CL}_\triangleright\) is complete in the sense that if \(M\triangleright N\) in the sense we have just described, then \(\text{CL}_\triangleright\) proves \(M\triangleright N\). (It is easy to see that the converse implication is true too.)

The notion of reduction is a weaker relation than one-step reduction, and so it is useful to distinguish a subclass of terms using the stronger relation. A term is in normal form (nf) when it contains no redexes. Note that one-step reduction does not need to decrease the total number of redexes that a term contains, hence, it does not follow that every term can be turned into a term in nf via finitely many one-step reductions. Indeed, some terms do not reduce to a term in nf.

Reduction is arguably an important relation between terms that denote functions. The typical steps in a program execution and in other concrete calculations are function applications rather than moves in the other direction, what is called expansion. However, the notion of the equality of functions is familiar to everybody from mathematics, and the analogous notion has been introduced in CL too. The transitive, reflexive, symmetric closure of the one-step reduction relation is called (weak) equality. A formalization of equational CL may be obtained by extending the standard equational logic with combinatory axioms and rules characterizing the combinatory constants and the application operation.

| \(M=M\) | \(\textsf{K}MN=M\) | \(\quad\textsf{S}MNP=MP(NP)\quad\) | |

| \(\quad\dfrac{M=N \quad N=P}{M=P}\quad\) | \(\dfrac{M=N}{N=M}\) | \(\dfrac{M=N}{MP=NP}\) | \(\dfrac{M=N}{PM=PN}\) |

The first axiom and the first two rules constitute equational logic. The constants are again the combinators \(\textsf{S}\) and \(\textsf{K}\). Note that \(\text{CL}_=\) could have been defined as an extension of \(\text{CL}_\triangleright\) by adding the rule of symmetry, that would have paralleled the description of the definition of equality from reduction as its transitive, symmetric closure. We chose instead to repeat the inequational axioms and rules with the new notation (and add the rule of symmetry) to make the two definitions self-contained and easy to grasp. The two characterizations of \(=\) coincide—as those of \(\triangleright\) did.

\(\text{CL}_\triangleright\) and \(\text{CL}_=\) share a feature that may or may not be desirable—depending on what sort of understanding of functions is to be captured. To illustrate the issue, let us consider the one-place combinators \(\textsf{SKK}\) and \(\textsf{SK}(\textsf{KK})\). It is easy to verify that \(\textsf{SKK}M\triangleright M\) and \(\textsf{SK}(\textsf{KK})M \triangleright M\). However, neither \(\textsf{SKK}\triangleright \textsf{SK}(\textsf{KK})\) nor \(\textsf{SK}(\textsf{KK})\triangleright \textsf{SKK}\) is provable in \(\text{CL}_\triangleright\); a fortiori, the equality of the two terms in not provable in \(\text{CL}_=\). This means that \(\text{CL}_\triangleright\) and \(\text{CL}_=\) formalize intensional notions of functions, where “intensionality” implies that functions that give the same output on the same input may remain distinguishable.

The archetypical intensional functions that one is likely to encounter are algorithms. As examples, we might think of various specifications to calculate the decimal expansion of \(\pi\), or various computer programs that behave in the same way. For instance, compilers (for one and the same language) may differ from each other by using or not using some optimizations, and thereby, producing programs from a given piece of code that have identical input–output behavior but different run times.

If functions that are indistinguishable from the point of view of their input–output behavior are to be identified, that is, an extensional understanding of functions is sought, then \(\text{CL}_\triangleright\) and \(\text{CL}_=\) have to be extended by the following rule, (where the symbol \(\ddagger\) is to be replaced by \(\triangleright\) or \(=\), respectively).

\[ \frac{Mx\ddagger Nx}{M\ddagger N} \text{ where } x \text{ is not free in } MN. \]2.2 Church–Rosser theorems and consistency theorems

The calculuses \(\text{CL}_\triangleright\) and \(\text{CL}_=\) of the previous section formalize reduction and equality. However, \(\triangleright\) and \(=\) have some further properties that are important when the terms are thought to stand for functions. The next theorem is one of the earliest and best-known results about CL.

Church–Rosser theorem (I). If \(M\) reduces to \(N\) and \(P\), then there is a term \(Q\) to which both \(N\) and \(P\) reduce.

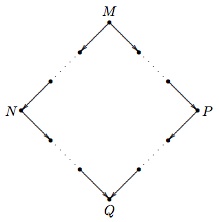

Figure 1. Illustration for the Church–Rosser theorem (I)

If we think that reduction is like computing the value of a function, then the Church–Rosser theorem—in a first approximation—can be thought to state that the final result of a series of calculations with a term is unique—independently of the order of the steps. This is a slight overstatement though, because uniqueness implies that each series of calculations ends (or “loops” on a term). That is, if there is a unique final term, then only finitely many distinct consecutive calculation steps are possible.

A coarse analogy with elementary arithmetic operations, perhaps, can shed some light on the situation. The addition and multiplication of natural numbers always yield a natural number. However, if division is included then it is no longer true that all numerical expressions evaluate to a natural number, since \(7/5\) is a rational number that is not a natural one, and \(n/0\) is undefined (even if \(n\) were real). That is, some numerical expressions do not evaluate to a (natural) number. Although the analogy with combinatory terms is not very tight, it is useful. For instance, \(n/0\) (assuming that the codomain of the function \(\lambda n.\,n/0\) is extended to permit \(r\) to be rational) could be implemented on a computer by a loop (that would never terminate when executed if \(n\ne0\)) which would go through an enumeration of the rational numbers trying to find an \(r\) such that \(r\cdot0=n\).

The combinatory terms \(\textsf{WWW}\) and \(\textsf{WI}(\textsf{WI})\) are, perhaps, the simplest examples of terms that do not have a normal form. Both terms induce an infinite reduction sequence, that is, an infinite chain of successive one-step reductions. To make the example more transparent, let us assume for a moment that \(\textsf{W}\), \(\textsf{I}\), \(\textsf{C}\), etc. are not defined from \(\textsf{K}\) and \(\textsf{S}\), but are primitive constants. The contraction of the only redex in \(\textsf{WWW}\) returns the same term, which shows that uniqueness does not imply that the term is in nf. The contraction of the only redex in \(\textsf{WI}(\textsf{WI})\) gives \(\textsf{I}(\textsf{WI})(\textsf{WI})\) that further reduces to the term we started with. A slightly more complicated example of a term that has only infinite reduction sequences is \(\textsf{Y}(\textsf{CKI})\). This term has a reduction sequence (in which each contracted redex is headed by \(\textsf{Y}\)) that contains infinitely many distinct terms. It is also possible to create infinite reduction sequences that start with \(\textsf{Y} (\textsf{CKI})\) and have various loops too. To sum up, the Church–Rosser theorem, in general, does not guarantee the uniqueness of the term \(Q\). However, if \(M\) has a normal form then that is unique.

The Church–Rosser theorem is often stated as follows.

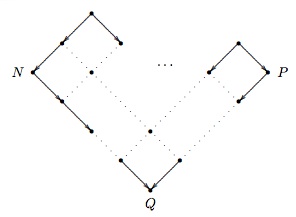

Church–Rosser theorem (II). If \(N\) and \(P\) are equal, then there is a term \(Q\) to which both \(N\) and \(P\) reduces.

Figure 2. Illustration for the Church–Rosser theorem (II)

The second form of the Church–Rosser theorem differs from the first in its assumption. From the definition of equality as a superset of reduction, it is obvious that the first form of the theorem is implied by the second. However, despite the weaker assumption in the second formulation of the Church–Rosser theorem, the two theorems are equivalent. Equality is the transitive, symmetric closure of reduction, which means that if two terms are equal then there is a finite path comprising reduction and expansion steps (which decompose into one-step reductions and one-step expansions, respectively). Then by finitely many applications of the first Church–Rosser theorem (i.e., by induction on the length of the path connecting \(N\) and \(P\)), the first Church–Rosser theorem implies the second formulation.

Modern proofs of the Church–Rosser theorem for CL proceed indirectly because one-step reduction fails to have the diamond property. A binary relation \(R\) (e.g., reduction) is said to have the diamond property when \(xRy\) and \(xRz\) imply that \(yRv\) and \(zRv\) for some \(v\). If a binary relation \(R\) has the diamond property, so does its transitive closure. To exploit this insight in the proof of the Church–Rosser theorem, a suitable subrelation of reduction has to be found. The sought after subrelation should possess the diamond property, and its reflexive transitive closure should coincide with reduction.

The following counterexample illustrates that one-step reductions of a term may yield terms that further do not reduce to a common term in one step. \(\textsf{SKK}(\textsf{KKS})\triangleright_1\textsf{SKKK}\) and \(\textsf{SKK}(\textsf{KKS})\triangleright_1\textsf{K}(\textsf{KKS}) (\textsf{K}(\textsf{KKS}))\), and then some of the potential reduction sequences are as follows.

- \(\textsf{SKKK}\triangleright_1\textsf{KK}(\textsf{KK})\triangleright_1 \textsf{K}\)

- \(\textsf{K}(\textsf{KKS})(\textsf{K}(\textsf{KKS}))\triangleright_1 \textsf{KKS}\triangleright_1\textsf{K}\)

- \(\textsf{K}(\textsf{KKS})(\textsf{K}(\textsf{KKS}))\triangleright_1 \textsf{KK}(\textsf{K}(\textsf{KKS}))\triangleright_1 \textsf{KK}(\textsf{KK})\triangleright_1\textsf{K}\)

- \(\textsf{K}(\textsf{KKS})(\textsf{K}(\textsf{KKS}))\triangleright_1 \textsf{K}(\textsf{KKS})(\textsf{KK})\triangleright_1\textsf{KKS} \triangleright_1\textsf{K}\)

- \(\textsf{K}(\textsf{KKS})(\textsf{K}(\textsf{KKS}))\triangleright_1 \textsf{K}(\textsf{KKS})(\textsf{KK})\triangleright_1\textsf{KK} (\textsf{KK})\triangleright_1\textsf{K}\)

The failure of the diamond property is obvious once we note that \(\textsf{SKKK}\triangleright_1\textsf{KK}(\textsf{KK})\) (only), but \(\textsf{K}(\textsf{KKS})(\textsf{K}(\textsf{KKS}))\) does not reduce in one step to \(\textsf{KK}(\textsf{KK})\).

An appropriate subrelation of reduction is the simultaneous reduction of a set of nonoverlapping redexes, which is denoted by \(\triangleright _\textrm{sr}\). ‘Nonoverlapping’ means that there are no shared subterm occurrences between two redexes. \(\triangleright_\textrm{sr}\) includes \(\triangleright_1\) because a one-step reduction of a redex may be viewed instead as \(\triangleright_\textrm{sr}\) of a singleton set of redexes. \(\triangleright_\textrm{sr}\) is, obviously, included in \(\triangleright\) (i.e., in reduction). These two facts imply that the reflexive transitive closure of \(\triangleright_\textrm{sr}\) is reduction—when the tonicity of the reflexive transitive closure operation (denoted by \(^*\)) is taken into account.

(1)–(3) summarize the key inclusions between the relations mentioned.

- (1)

- \(\triangleright_1\subseteq\triangleright_\textrm{sr}\; \Rightarrow\;\triangleright_1^*\subseteq\triangleright_\textrm{sr}^*\)

- (2)

- \(\triangleright_\textrm{sr}\subseteq\triangleright \;\Rightarrow\;\triangleright_\textrm{sr}^*\subseteq\triangleright^*\)

- (3)

- \(\triangleright_1^*\subseteq\triangleright^*\quad \textrm{ and }\quad\triangleright^*=\triangleright\).

The central property of \(\triangleright_\textrm{sr}\) that we need is the content of the following theorem.

Theorem. (Diamond property for \(\triangleright_\textrm{sr}\)) If \(M\triangleright_\textrm{sr}N\) and \(M\triangleright_\textrm{sr}P\) then there is a term \(Q\) such that both \(N\triangleright_\textrm{sr}Q\) and \(P\triangleright_\textrm{sr}Q\).

The proof of this theorem is an easy induction on the term \(M\). The properties of \(\triangleright_\textrm{sr}\) guarantee that one or more one-step reductions can be performed at once, but the reductions cannot interfere (or overlap) with each other.

The consistency of CL follows from the Church–Rosser theorem together with the existence of (at least two) distinct normal forms.

Theorem. (Consistency) CL is consistent, that is, there are terms that do not reduce to each other, hence they are not equal.

Not all terms have an nf, however, many do. Examples, first of all, include \(\textsf{S}\) and \(\textsf{K}\). (The variables, if included, of which there are \(\aleph_0\) many, are all in nf.) None of these terms contains a redex, hence each reduces only to itself. By the Church–Rosser theorem, it is excluded that some term \(M\) could reduce to both \(x\) and \(\textsf{S}\) (making \(\textsf{S}\) equal to \(x\)).

The interaction between infinite reduction sequences and nfs deserves a more careful inspection though. The terms \(\textsf{WWW}\), \(\textsf{Y} (\textsf{CKI})\) and \(\textsf{WI}(\textsf{WI})\) have only infinite reduction sequences. However, the existence of an infinite reduction sequence for a term does not imply that the term has no normal form (when the combinatory base is complete or contains a cancellator). \(\textsf{Y} (\textsf{KI})\) reduces to \(\textsf{KI}(\textsf{Y}(\textsf{KI}))\), \(\textsf{KI}(\textsf{KI}(\textsf{Y}(\textsf{KI})))\), \(\textsf{KI} (\textsf{KI}(\textsf{KI}(\textsf{Y}(\textsf{KI})))),\ldots\) as well as to \(\textsf{I}\).

A term weakly normalizes when it has an nf, whereas a term strongly normalizes when all its reduction sequences lead to an nf (hence, to the nf) of the term. A computational analogue of a strongly normalizing term is a (nondeterministic) program that terminates on every branch of computation, whereas termination on at least one branch is akin to weak normalization.

The importance of normalization led to a whole range of questions (and an extensive literature of answers). How does the order of the reduction steps (i.e., a reduction strategy) affect finding the nf (if there is one)? Are there combinatory bases that guarantee the existence of normal forms for every combinator over that base? To quickly illustrate possible answers to our sample questions, we start with noting that if there is no combinator with a duplicative effect in a base, then all combinators over that base strongly normalize. This is a very easy answer, and as a concrete base, we could have, for example, \(\{\textsf{B},\textsf{C},\textsf{K}\}\) or \(\{\textsf{B}, \textsf{C},\textsf{I}\}\), which have some independent interest in view of their connection to simply typed calculuses. However, these bases are far from being combinatorially complete and even a fixed point combinator is undefinable in them.

We could ask a slightly different question: If we start with the base \(\{\textsf{S},\textsf{K}\}\) and we omit \(\textsf{S}\), then we get the base \(\{\textsf{K}\}\) and all the combinators strongly normalize, but what if we omit \(\textsf{K}\)? Do the combinators over \(\{\textsf{S}\}\) strongly normalize or at least normalize? The answer is “no.” A term (discovered by Henk Barendregt in the early 1970s) that shows the lack of strong normalization is \(\textsf{SSS}(\textsf{SSS})(\textsf{SSS})\). The first \(\textsf{S}\) is the head of a (indeed, the only) redex, and the head reduction sequence of this term is infinite. Since \(\{\textsf{S}\}\) does not contain any combinator with a cancellative effect, the existence of an infinite reduction sequence for a term means that the term has no nf. There are shorter combinators over the base \(\{\textsf{S}\}\) without an nf, for example, \(\textsf{S}(\textsf{SS})\textsf{SSSSS}\) comprises only eight occurrences of \(\textsf{S}\).

The sorts of questions we illustrated here (or rather, the answers to them) can become a bit technical, because they often involve concepts and techniques from graph theory, automata theory and the theory of term-rewriting.

2.3 The existence of fixed points and combinatorial completeness

Schönfinkel proved that \(\textsf{S}\) and \(\textsf{K}\) suffice to define the other combinators he introduced, and we mentioned in the definition of \(\text{CL}_\triangleright\) that the set of constants is limited to \(\textsf{S}\) and \(\textsf{K}\), because other combinators could be defined from those.

To demonstrate the sense in which definability is understood here we consider the example of \(\textsf{B}\). The axiom for \(\textsf{B}\) is \(\textsf{B} xyz\triangleright_1x(yz)\), and if we take \(\textsf{S}(\textsf{KS}) \textsf{K}\) instead of \(\textsf{B}\), then the following reduction sequence results.

\[ \textsf{S}(\textsf{KS})\textsf{K}xyz\triangleright_1\textsf{KS}x (\textsf{K}x)yz\triangleright_1\textsf{S}(\textsf{K}x)yz\triangleright_1 \textsf{K}xz(yz)\triangleright_1x(yz) \]The term \(\textsf{S}(\textsf{KS})\textsf{K}\) is in nf, however, to be in nf is not a requirement for definability. It is more convenient to work with defining terms that are in nf, because an application of a combinator that is not in nf could be started with reducing the combinator to its normal form. (Also, there are always infinitely many combinators that reduce to a combinator.) However, note that the preference for choosing combinators in nf is not meant to imply that a combinator cannot be defined by two or more terms in nf; below we give two definitions (involving only \(\textsf{S}\) and \(\textsf{K}\)) for \(\textsf{I}\).

If the constants are \(\textsf{S}\) and \(\textsf{K}\), then the combinators are all those terms that are formed from \(\textsf{S}\) and \(\textsf{K}\) (without variables). Once we have defined \(\textsf{B}\) as \(\textsf{S}(\textsf{KS})\textsf{K}\), we may use \(\textsf{B}\) in further definitions as an abbreviation, and we do that primarily to reduce the size of the resulting terms as well as to preserve the transparency of the definitions.

The following list gives definitions for the other well-known combinators that we mentioned earlier. (Here ‘\(=\)’ is placed between a definiendum and a definiens.)

| \(\textsf{I}=\textsf{SK}(\textsf{KK})\) | \(\textsf{T}=\textsf{B}(\textsf{SI})\textsf{K}\) | \(\textsf{C}=\textsf{B}(\textsf{T}(\textsf{BBT}))(\textsf{BBT})\) |

| \(\textsf{W}=\textsf{CSI}\) | \(\textsf{M}=\textsf{SII}\) | \(\textsf{Y}=\textsf{BW}(\textsf{BB}^\prime\textsf{M})(\textsf{BW}(\textsf{BB}^\prime\textsf{M}))\) |

| \(\textsf{V}=\textsf{BCT}\) | \(\textsf{B}^\prime=\textsf{CB}\) | \(\textsf{J}=\textsf{W}(\textsf{BC}(\textsf{B}(\textsf{B}(\textsf{BC}))(\textsf{B}(\textsf{BB})(\textsf{BB}))))\) |

The definitions are easily seen to imply that all these combinators depend on both \(\textsf{S}\) and \(\textsf{K}\), but it is not obvious from the definitions that the defined combinators are mutually independent, that is, that none of the listed combinators is definable from another one. (Clearly, some subsets suffice to define some of the combinators.) We do not intend to give an exhaustive list of interdefinability between various subsets of these combinators, but to hint at the multiplicity and intricacy of such definitions, we list a handful of them. We also introduce two further combinators \(\textsf{S}^\prime\) and \(\textsf{R}\).

| \(\textsf{I}=\textsf{SKK}\) | \(\textsf{I}=\textsf{WK}\) | \(\textsf{I}=\textsf{CK}(\textsf{KK})\) |

| \(\textsf{B}=\textsf{CB}^\prime\) | \(\textsf{S}^\prime=\textsf{CS}\) | \(\textsf{S}=\textsf{CS}^\prime\) |

| \(\textsf{W}=\textsf{S}^\prime\textsf{I}\) | \(\textsf{W}=\textsf{B}(\textsf{T}(\textsf{BM}(\textsf{BBT})))(\textsf{BBT})\) | \(\textsf{W}=\textsf{C}(\textsf{S}(\textsf{CC})(\textsf{CC}))\) |

| \(\textsf{R}=\textsf{BBT}\) | \(\textsf{Y}=\textsf{BM}(\textsf{CBM})\) | \(\textsf{Y}=\textsf{B}^\prime(\textsf{B}^\prime\textsf{M})\textsf{M}\) |

If the fixed point combinator \(\textsf{Y}\) is not taken to be a primitive, then there are various ways to define it—so far, we have listed three.

Fixed point theorem. For any function \(M\), there is a term \(N\) such that \(MN = N\).

The proof of this theorem is easy using a fixed point combinator, because a term that can play the rôle of \(N\) is \(\textsf{Y}M\).

Some of the definitions of \(\textsf{Y}\) have slightly different properties with respect to reduction. But the importance of the fixed point combinator is that it ensures that all functions have a fixed point and all recursive equations can be solved.

Both Haskell B. Curry and Alan Turing defined fixed point combinators (in CL or in the \(\lambda\)-calculus). If we consider the definitions

\[ \textsf{Y}_1=\textsf{BM}(\textsf{BWB}) \quad \textsf{Y}_2=\textsf{W}(\textsf{B}(\textsf{BW}(\textsf{BT})))(\textsf{W}(\textsf{B}(\textsf{BW}(\textsf{BT})))) \](where the subscripts are added to distinguish the two definitions), then we can see that \(\textsf{Y}_1 M=M(\textsf{Y}_1M)\), but for \(\textsf{Y}_2\), \(\textsf{Y}_2M\triangleright M(\textsf{Y}_2M)\) holds too. In this respect, \(\textsf{Y}_1\) is similar to Curry’s fixed point combinator (and really, to any fixed point combinator), whereas \(\textsf{Y}_2\) is like Turing’s fixed point combinator.

The fixed point theorem demonstrates—to some extent—the expressive power of CL. However, fixed point combinators may be defined from bases without a cancellator (as \(\textsf{Y}_1\) and \(\textsf{Y}_2\) show). The full power of CL (with the base \(\{\textsf{S},\textsf{K}\}\)) is enunciated by the following theorem.

Theorem. (Combinatorial completeness) If \(f(x_1,\ldots,x_n)= M\) (where \(M\) is a term containing no other variables than those explicitly listed), then there is a combinator \(\textsf{X}\) such that \(\textsf{X} x_1\ldots x_n\) reduces to \(M.\)

The theorem’s assumption may be strengthened to exclude the possibility that some occurrences of \(x\) do not occur in \(M\). Then the consequent may be strengthened by adding the qualification that \(\textsf{X}\) is a relevant combinator, more specifically, \(\textsf{X}\) is a combinator over \(\{\textsf{B},\textsf{W}, \textsf{C},\textsf{I}\}\) (a base that does not contain a combinator with cancellative effect), or equivalently, \(\textsf{X}\) is a combinator over \(\{\textsf{I},\textsf{J}\}\). (These bases correspond to Church’s preferred \(\lambda\textsf{I}\)-calculus.)

Combinatorial completeness is usually proved via defining a “pseudo” \(\lambda\)-abstraction (or bracket abstraction) in CL. There are various algorithms to define a bracket abstraction operator in CL, that behaves as the \(\lambda\) operator does in a \(\lambda\)-calculus. This operator is usually denoted by \([\,]\) or by \(\lambda^*\). The algorithms differ from each other in various aspects: (i) the set of combinators they presuppose, (ii) the length of the resulting terms, (iii) whether they compose into (syntactic) identity with the algorithm that translates a combinatory term into a \(\lambda\)-term, and (iv) whether they commute with either of the reductions or equalities.

The first algorithm, the elements of which may already be found in Schönfinkel (1924), consists of the following clauses that are applied in the order of their listing.

\[\begin{align} \tag{k} &[x].\,M=\textsf{K}M, \text{ where } x\notin\textrm{fv}(M) \\ \tag{i} &[x].\,x=\textsf{I} \\ \tag{\(\eta\)} &[x].\,Mx=M, \text{ where } x\notin\textrm{fv}(M) \\ \tag{b} &[x].\,MN=\textsf{B}M([x].\,N), \text{ where } x\notin\textrm{fv}(M) \\ \tag{c} &[x].\,MN=\textsf{C}([x].\,M)N, \text{ where } x\notin\textrm{fv}(N) \\ \tag{s} &[x].\,MN=\textsf{S}([x].\,M)([x].\,N) \end{align}\]For example, if this algorithm is applied to the term \(\lambda xyz.x(yz)\) (that is, to the \(\lambda\)-translation of \(\textsf{B}\)), then the resulting term is \(\textsf{B}\). However, if \(\eta\) is omitted then a much longer term results, namely, \(\textsf{C}(\textsf{BB}(\textsf{BBI}))(\textsf{C} (\textsf{BBI})\textsf{I})\). Another algorithm, for example, consists of clauses (i), (k) and (s).

3. Nonclassical logics and typed CL

3.1 Simple types

Combinatory terms are thought of as functions, and functions are thought to have a domain (a set of possible inputs) and a codomain (a set of possible outputs). For example, if a unary function is considered as a set of ordered pairs, then the domain and codomain are given by the first and second projections, respectively. If partial and non-onto functions are permitted, then supersets of the sets resulting from the first and second projections can also be domains and codomains. Category theory, where functions are components of categories (without a set theoretic reduction assumed), retains the notions of a domain and a codomain; moreover, every function has a unique domain and codomain.

Functions that have the same domain and codomain may be quite different, however, by abstraction, they are of the same sort or type. As a simple illustration, let \(f_1\) and \(f_2\) be two functions defined as \(f_1= \lambda x.\,8\cdot x\) and \(f_2=\lambda x.\,x/3\). If \(x\) is a variable ranging over reals, then \(f_1\) and \(f_2\) have the same domain and codomain (i.e., they have the same type \(\mathbb{R}\rightarrow\mathbb{R}\)), although \(f_1\ne f_2\), because \(f_1(x)\ne f_2(x)\) whenever \(x\ne0\). The usual notation to indicate that a function \(f\) has \(A\) as its domain and \(B\) as its codomain is \(f\colon A\rightarrow B\). It is a happy coincidence that nowadays ‘\(\rightarrow\)’ is often used in logics as a symbol for entailment or (nonclassical) implication.

Given a set of basic types (that we denote by \(P\)), types are defined as follows.

- If \(p\in P\) then \(p\) is a type;

- if \(A,B\) are types then \((A\rightarrow B)\) is a type.

To distinguish these types from other types—some of which are introduced in the next section—they are called simple types.

The connection between combinators and types may be explained on the example of the identity combinator. Compound combinatory terms are formed by the application operation. Premises of modus ponens can be joined by fusion (denoted by \(\circ\)), which is like the application operation in the strongest relevance logic \(B\). \(\textsf{I}x \triangleright x\) and so if \(x\)'s type is \(A\), then \(\textsf{I}x\)'s type should imply \(A\). Furthermore, \(\textsf{I}x\)'s type should be of the form \(X\circ A\), for some type \(X\); then \(\textsf{I}\) can be of type \(A\rightarrow A\). In the example, we fixed \(x\)'s type, however, \(\textsf{I}\) can be applied to any term, hence, it is more accurate to say that \(A\rightarrow A\) is the type schema of \(\textsf{I}\), or that \(\textsf{I}\)'s type can be any formula of the form of self-implication.

The type-assignment system TA\(_\textrm{CL}\) is formally defined as the following deduction system. (When implicational formulas are considered as types, the usual convention is to omit parentheses by association to the right.)

\[ \Delta\vdash\textsf{S}\colon(A\rightarrow B\rightarrow C)\rightarrow (A\rightarrow B)\rightarrow A\rightarrow C \] \[ \Delta\vdash\textsf{K}\colon A \rightarrow B\rightarrow A \] \[ \frac{\Delta\vdash M\colon A\rightarrow B \quad\Theta\vdash N\colon A} {\Delta,\Theta\vdash MN\colon B} \]Expressions of the form \(M\colon A\) above are called type assignments. A characteristic feature of type-assignment systems is that if \(M\colon A\) is provable then \(A\) is considered to be one of the types that can be assigned to \(M\). However, a provable assignment does not preclude other types from becoming associated to the same term \(M\), that is a type assignment does not fix the type of a term rigidly. \(\Delta\) and \(\Theta\) on the left-hand side of \(\vdash\) are sets of type assignments to variables, and they are assumed to be consistent—meaning that no variable may be assigned two or more types.

Type assignment systems are often called Curry-style typing systems. Another way to type terms is by fixing a type for each term, in which case each term has exactly one type. Such calculuses are called Church-style typing systems. Then, for example, the identity combinator \(\textsf{I}\) of type

\[ (A\rightarrow A\rightarrow A)\rightarrow A\rightarrow A\rightarrow A \]is not the same as the identity combinator \(\textsf{I}\) of type

\[ ((B \rightarrow B)\rightarrow B)\rightarrow(B\rightarrow B)\rightarrow B. \]The two styles of typing have quite a lot in common, but there are certain differences between them. In particular, no self-application is typable in a Church-style typing system, whereas some of those terms can be assigned a type in a Curry-style typing system. Curry-style typing systems proved very useful in establishing various properties of CL and \(\lambda\)-calculuses. The Church-style typing, on the other hand, emulates more closely the typing in certain functional programming languages (without objects).

There is no one-one correspondence between types and combinators in either style of typing: not all combinators can be assigned a type, and some implicational formulas cannot be assigned to any combinatory term. A combinator that can be assigned a type is said to be typable, and a type that can be assigned to a combinator is said to be inhabited. For instance, \(\textsf{M}\) has no (simple) type, because an implicational formula is never identical to its own antecedent. On the other hand, Peirce’s law, \(((A\rightarrow B)\rightarrow A) \rightarrow A\) is not the type of any combinator in the type assignment system TA\(_\textrm{CL}\). Despite (or, indeed, due to) the discrepancy between implicational formulas and combinatory terms, classes of implicational formulas that can be assigned to certain sets of combinatory terms coincide with sets of theorems of some important logics.

Theorem. \(A\rightarrow B\) is a theorem of the intuitionistic implicational logic, denoted by \(IPC_\rightarrow\) or \(J_\rightarrow\), iff for some \(M\), \(M\colon A\rightarrow B\) is a provable type assignment in TA\(_\textrm{CL}\), where the term \(M\) is built from \(\textsf{S}\) and \(\textsf{K}\), that is, \(M\) is a combinator over the base \(\{\textsf{S},\textsf{K}\}\).

A combinator that inhabits an implicational theorem encodes a proof of that theorem in the deduction system TA\(_\textrm{CL}\). There is an algorithm to recover the formulas that constitute a proof of the type of the combinator, moreover, the algorithm produces a proof that is minimal and well-structured. The correspondence between implicational theorems of intuitionistic logic (and their proofs) and typable closed \(\lambda\)-terms (or combinators) is called the Curry–Howard isomorphism. The usual notion of a proof in a Hilbert-style axiomatic system is quite lax, but it can be tidied up to obtain the notion of traversing proofs. In a traversing proof there is a one-one correspondence between subterms of a combinator and the formulas in the traversing proof as well as between applications and detachments therein (cf. Bimbó 2007).

The above correspondence can be modified for other implicational logics and combinatory bases. The next theorem lists correspondences that obtain between the implicational fragments of the relevance logics \(R\) and \(T\) and some combinatory bases that are of interest in themselves.

Theorem. \(A\rightarrow B\) is a theorem of \(R_{\rightarrow}\) (or \(T_{\rightarrow}\)) iff for some \(M\), \(M\colon A\rightarrow B\) is a provable type assignment where \(M\) is a combinator over \(\{\textsf{B}, \textsf{I},\textsf{W},\textsf{C}\}\) (or over \(\{\textsf{B},\textsf{B}^\prime, \textsf{I},\textsf{S},\textsf{S}^\prime\}\)).

The calculus \(\textrm{TA}_\textrm{CL}\) may be amended by adding axiom schemas for the combinators in the two bases. (The axiom schemas of the combinators that are not in these bases may be omitted from the calculus or simply may be neglected in proofs.) The new axioms are as follows.

\[\begin{align*} \textsf{B} &\colon (A\rightarrow B)\rightarrow(C\rightarrow A)\rightarrow C\rightarrow B \\ \textsf{B}^\prime &\colon (A\rightarrow B)\rightarrow(B\rightarrow C)\rightarrow A\rightarrow C \\ \textsf{C} &\colon (A\rightarrow B\rightarrow C)\rightarrow B\rightarrow A\rightarrow C \\ \textsf{W} &\colon (A\rightarrow A\rightarrow B)\rightarrow A\rightarrow B \\ \textsf{S}^\prime &\colon (A\rightarrow B)\rightarrow(A\rightarrow B\rightarrow C)\rightarrow A\rightarrow C \\ \textsf{I} &\colon A\rightarrow A \end{align*}\]The combinatory base \(\{\textsf{B},\textsf{C},\textsf{W},\textsf{I}\}\) is especially interesting, because these combinators suffice for a definition of a bracket abstraction that is equivalent to the \(\lambda\)-abstraction of the \(\lambda\textsf{I}\)-calculus. To put it differently, all functions that depend on all of their arguments can be defined by this base. The other base allows the definition of functions that can be described by terms in the class of the so-called hereditary right maximal terms (cf. Bimbó 2005). Informally, the idea behind these terms is that functions can be enumerated, and then their successive applications should form a sequence in which the indexes are “globally increasing.”

A type assignment has two parts: a term and a formula. The questions whether some term can be assigned a type and whether some type can be assigned to a term are the problems of typability and of inhabitation, respectively. Although these questions may be posed about one and the same set of type assignments, the computational properties of these problems may differ widely.

Theorem. It is decidable if a term \(M\) can be assigned a type, that is, if \(M\) is typable.

The theorem is stated in a rather general way without specifying exactly which combinatory base or which modification of TA\(_\textrm{CL}\) is assumed, because the theorem holds for any combinatory base. Indeed, there is an algorithm that given a combinator decides if the combinator is typable, and for a typable combinator produces a type too. Of course, in the combinatorially complete base \(\{\textsf{S},\textsf{K}\}\) all the combinators are expressible as terms consisting of these two combinators only. However, this assumption is not needed for a solution of typability, though it might provide an explanation for the existence of a general algorithm.

The problem of inhabitation does not have a similar general solution, because the problem of the equality of combinatory terms is undecidable. Given a set of axiom schemas that are types of combinators with detachment as the rule of inference, the problem of the decidability of a logic can be viewed as the problem of inhabitation. Indeed, if \(A\) is an implicational formula, then to decide whether \(A\) is a theorem amounts to determining if there is a term (over the base that corresponds to the axiom schemas) that can be assigned \(A\) as its type. (Of course, a more sophisticated algorithm may actually produce such a term, in which case it is easy to verify the correctness of the claim by reconstructing the proof of the theorem.)

To see from where complications can emerge in the case of decidability, we compare the rule of the formation of terms and the rule of detachment. Given a combinatory base and a denumerable set of variables, it is decidable by inspection whether a term is or is not in the set of the generated terms. That is, all the inputs of the rule are retained in the output as subterms of the resulting term. In contrast, an application of detachment results in a formula that is a proper subformula of the major premise (and in the exceptional case when the major premise is an instance of self-identity it is identical to the minor premise). The lack of the retention of all subformulas of premises through applications of modus ponens is the culprit behind the difficulty of some of the decision problems of implicational logics. It is then somewhat unsurprising that for many decidable logics there is a decision procedure utilizing sequent calculuses in which the cut theorem and the subformula property hold. A solution to the problem of inhabitation may run into difficulties similar to those that arise in decidability problems in general.

For example, the combinator \(\textsf{K}\) can be assigned the following type.